CrawlKit

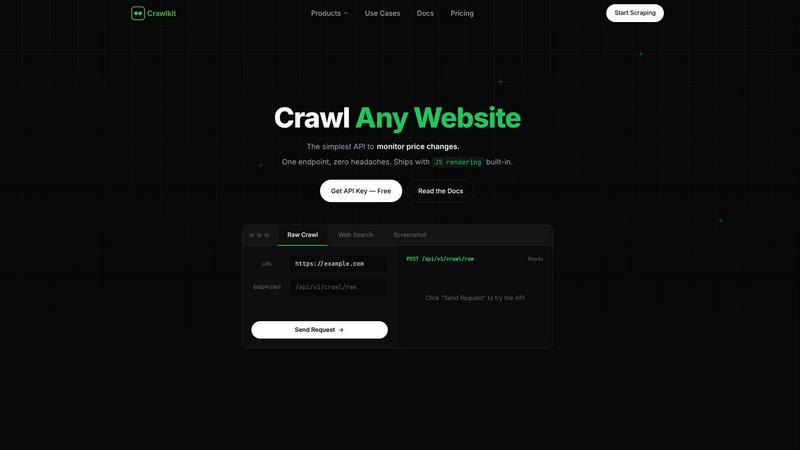

CrawlKit simplifies data extraction by turning any website into a structured API with a single request.

About CrawlKit

CrawlKit is a web data extraction platform for developers and data teams who need reliable, scalable access to web data. It takes over the hardest parts of web scraping: managing rotating proxies, running headless browsers, and dealing with anti-bot measures. Instead of building and maintaining their own scraping infrastructure, users send requests to a straightforward API, and CrawlKit handles proxy rotation, browser rendering, and rate limits. That leaves developers and data professionals free to focus on using the extracted data for analysis and decision-making. Supported data includes raw page content, search results, and professional data from platforms like LinkedIn.

Features of CrawlKit

Simplified API Access

CrawlKit offers a simple API, so users can request data without handling the underlying scraping mechanics themselves. Developers can integrate data extraction into their applications with minimal setup.

Comprehensive Data Sources

With CrawlKit, users can extract structured data from a wide range of sources, including websites, social media platforms like LinkedIn and Instagram, and app stores. All of these sources are available through a single API.

Reliable Data Extraction

CrawlKit waits for pages to load fully and validates responses before delivering results, so the data users receive is clean and complete.

Transparent Pricing Model

CrawlKit uses a credit-based pricing system with no hidden fees or unexpected charges. Users know what they will pay, which makes it easier to budget and plan their data extraction.

Use Cases of CrawlKit

CRM Enrichment

CrawlKit can enhance customer relationship management (CRM) systems by automatically pulling LinkedIn profile data. This feature enables businesses to enrich their leads with job titles, company information, and contact details, streamlining the lead generation process.
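The enrichment step described above can be sketched as a merge that fills in missing lead fields without overwriting what the CRM already holds. This is a minimal illustration only: the function name `enrich_lead` and the field names `job_title`, `company`, and `email` are assumptions made for the example, not CrawlKit's actual response format.

```python
# Hypothetical field names chosen for illustration; a real profile
# response may use different keys.
ENRICHABLE_FIELDS = ("job_title", "company", "email")


def enrich_lead(lead: dict, profile: dict) -> dict:
    """Return a copy of `lead` with empty fields filled from `profile`.

    Values already present on the lead are kept, so data entered by
    hand in the CRM is never overwritten by extracted data.
    """
    enriched = dict(lead)  # shallow copy; the input lead is not mutated
    for field in ENRICHABLE_FIELDS:
        value = profile.get(field)
        if value and not enriched.get(field):
            enriched[field] = value
    return enriched
```

Keeping existing values is a design choice for this sketch; a team that trusts extracted data more than manual entries could invert the check.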

Competitor Monitoring

Data teams can use CrawlKit to track competitors' social media performance, particularly on platforms like Instagram. By monitoring follower counts, engagement rates, and post performance, businesses can gain insights into their competitors' strategies and adjust their own accordingly.

App Review Analysis

CrawlKit allows users to extract and analyze reviews from app stores. This functionality aids in understanding customer sentiment and feedback, enabling companies to improve their products based on user insights and trends.

Market Research

CrawlKit supports market research by letting users gather structured data from a variety of online sources. Businesses can then analyze market trends, consumer behavior, and competitive landscapes.

Frequently Asked Questions

What types of data can I extract using CrawlKit?

CrawlKit allows users to extract a variety of data types, including raw page content, structured data from social media platforms like LinkedIn and Instagram, as well as app store details and reviews.

Is there a limit to the number of requests I can make?

CrawlKit operates on a credit-based system with no monthly commitments and no rate limits on requests. Users purchase credits as needed, so usage can scale up or down with their data extraction needs.

How does CrawlKit handle website changes or blocking mechanisms?

CrawlKit is designed to manage changes in website structures and blocking mechanisms. It includes features like proxy rotation and browser rendering to ensure that users receive valid and complete data even when websites implement anti-scraping measures.

Can I integrate CrawlKit with other tools or platforms?

Yes. CrawlKit is a simple HTTP API, so it can be used from any programming language or automation tool that can send HTTP requests, and it fits into existing workflows.
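As a rough illustration of what calling an HTTP API of this kind looks like, here is a minimal Python sketch using only the standard library. Everything specific in it is an assumption: the endpoint `https://api.example.com/v1/crawl`, the bearer-token header, and the `url` request field are placeholders, not CrawlKit's documented interface, so consult the official documentation for the real values.

```python
import json
import urllib.request

# Placeholder endpoint, NOT CrawlKit's real URL; replace it with the
# value from the official documentation.
API_URL = "https://api.example.com/v1/crawl"


def build_crawl_request(target_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for one page.

    The bearer-token header and the "url" field are assumed conventions.
    """
    payload = json.dumps({"url": target_url}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=payload,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def fetch(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Separating request construction from sending keeps the first step easy to inspect and test without network access; the same request translates directly to any other language or automation tool that can issue an HTTP POST.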

Top Alternatives to CrawlKit

TrafficClaw

Talk to your SEO & Analytics data - it finally talks back

Fusedash

Fusedash transforms raw data into intuitive dashboards and charts, enabling your team to act on insights swiftly.

Idearium

Idearium builds memorable websites that help your business grow online.

LinkFinder AI

LinkFinder AI enriches your leads with accurate company details like emails, websites, and LinkedIn profiles in minutes.

BlitzAPI

BlitzAPI provides instant access to verified B2B data through powerful APIs, enhancing your growth team's strategies.

Echoloc

Echoloc finds companies ready to buy by analyzing the hiring signals hidden in their job postings.

FilexHost

Effortlessly host and share any file with instant links, secure storage, and built-in viewers for a seamless experience.

GrowPanel

GrowPanel delivers real-time subscription analytics, empowering SaaS businesses to track vital metrics and optimize.