Mod vs OpenMark AI

Side-by-side comparison to help you choose the right product.

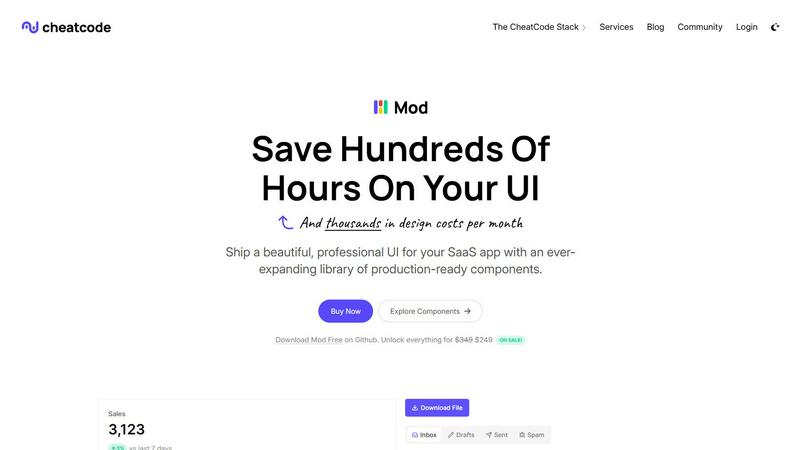

Mod is a CSS framework with ready-made components to build SaaS interfaces quickly and correctly.

OpenMark AI benchmarks over 100 language models on your specific task to find the best one for cost, speed, and quality.

Last updated: March 26, 2026

Feature Comparison

Mod

Extensive Component Library

Mod provides more than 88 ready-to-use UI components covering the essential building blocks of a SaaS application, from basic form inputs, buttons, and alerts to complex data tables, dashboards, and navigation systems. Each component follows modern design principles and is built for both function and appearance. Developers assemble interfaces by copying and pasting code instead of building and styling each element from scratch, which keeps the interface consistent and shortens development time.

Framework-Agnostic Design

A key strength of Mod is its independence from any specific JavaScript framework or backend technology. The components are built with standard, semantic HTML and styled with pure CSS, so they work with front-end frameworks and tooling such as Next.js, Nuxt, Vite, and Svelte, as well as backend-rendered views in Rails or Django. Developers are not locked into a particular tech stack and can adopt Mod in existing projects without major rewrites.

Complete Design System with Themes

Beyond individual components, Mod offers a full design system with 168 style utilities, two themes (light and dark), and support for more than 1,500 icons. The system provides a cohesive visual language for spacing, color, typography, and more, and dark mode support is built in. With this consistency predefined, every part of your application looks coordinated and styling decisions involve less guesswork.

Responsive & Mobile-First Architecture

Every component and style in Mod is built mobile-first: the default styles target small screens, and CSS media queries layer on adjustments for tablets and desktops. SaaS applications built with Mod are therefore responsive by default across device sizes. Developers do not need to write custom CSS for responsiveness, because it is built into the framework's core.

OpenMark AI

Plain Language Task Description

You do not need to write code or structured prompts to begin benchmarking. With OpenMark AI, you describe what you want the AI to do in your own words. The platform interprets your intent, whether it's "classify customer emails by sentiment" or "extract dates and names from legal documents," and constructs the necessary tests. This lowers the technical barrier, so product managers and developers can focus on the task's objective rather than on prompt engineering for multiple APIs.

Multi-Model Comparison in One Session

The platform enables you to test the same prompt or task against dozens of different LLMs simultaneously. This is a core differentiator from manual testing, where you would have to run separate, sequential calls to each model's API. With OpenMark AI, you launch one benchmark job and receive a unified results dashboard. This side-by-side comparison is essential for a clear, apples-to-apples evaluation of performance, putting models from different providers on an equal footing based on your specific criteria.
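To illustrate the difference between sequential calls and a single fan-out job, here is a minimal sketch that sends one prompt to several models concurrently. It is not OpenMark AI's implementation: `call_model`, `run_benchmark`, and the model names are hypothetical, and `call_model` returns a canned string instead of contacting a provider.

```python
from concurrent.futures import ThreadPoolExecutor


def call_model(model: str, prompt: str) -> str:
    """Hypothetical stand-in for a provider API call; returns a canned string."""
    return f"[{model}] response to: {prompt}"


def run_benchmark(models: list[str], prompt: str) -> dict[str, str]:
    """Send the same prompt to every model concurrently and collect results by model name."""
    workers = max(1, min(8, len(models)))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Submit all calls first so they can run in parallel, then gather the results.
        futures = {model: pool.submit(call_model, model, prompt) for model in models}
        return {model: future.result() for model, future in futures.items()}


outputs = run_benchmark(
    ["model-a", "model-b", "model-c"],
    "Classify this email by sentiment.",
)
for model, text in outputs.items():
    print(model, "->", text)
```

Because every model receives the identical prompt in the same job, the collected outputs can be compared directly.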

Holistic Performance Metrics

OpenMark AI measures more than accuracy or speed alone. It assesses model suitability along four dimensions: the quality of the output (scored against your task), the real cost per API request, the latency (response time), and the stability of the model across repeat runs. Seeing the variance in outputs is critical, because it tells you whether a model is dependable or whether its first successful response was a fluke. Together, these metrics support cost-efficiency analysis: finding the best quality relative to what you pay.
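As a rough illustration of how these four dimensions might be combined, the sketch below averages repeat runs per model and derives a simple quality-per-dollar figure. The `RunResult` fields, the `summarize` helper, the ratio itself, and all numbers are assumptions made for this example, not OpenMark AI's actual scoring.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class RunResult:
    """One benchmark run for one model (all fields are illustrative)."""
    quality: float    # task score in [0, 1]
    cost_usd: float   # cost of the API request
    latency_s: float  # response time in seconds


def summarize(runs: list[RunResult]) -> dict[str, float]:
    """Collapse repeat runs into the four dimensions plus quality per dollar."""
    avg_quality = mean([r.quality for r in runs])
    avg_cost = mean([r.cost_usd for r in runs])  # assumed to be non-zero
    return {
        "quality": avg_quality,
        "cost_usd": avg_cost,
        "latency_s": mean([r.latency_s for r in runs]),
        "quality_spread": pstdev([r.quality for r in runs]),
        "quality_per_dollar": avg_quality / avg_cost,
    }


# Made-up numbers for two hypothetical models, three runs each.
results = {
    "model-a": [RunResult(0.90, 0.010, 1.2), RunResult(0.92, 0.010, 1.1), RunResult(0.88, 0.010, 1.3)],
    "model-b": [RunResult(0.85, 0.002, 0.6), RunResult(0.80, 0.002, 0.7), RunResult(0.90, 0.002, 0.5)],
}

summaries = {name: summarize(runs) for name, runs in results.items()}
best_value = max(summaries, key=lambda name: summaries[name]["quality_per_dollar"])
for name, s in summaries.items():
    print(name, {k: round(v, 4) for k, v in s.items()})
print("best quality per dollar:", best_value)
```

In this made-up data, the cheaper model wins on quality per dollar but shows a wider spread in quality, which is the kind of trade-off the four metrics are meant to expose.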

Hosted Benchmarking with Credits

To simplify access and comparison, OpenMark AI uses a credit-based system. You purchase credits through OpenMark AI, and the platform handles all the backend API calls to its supported catalog of models. You do not need to source, configure, and manage separate API keys and accounts for every model provider you wish to test, which removes significant setup overhead and billing complexity. This makes large-scale benchmarking manageable for teams of any size.

Use Cases

Mod

Rapid Prototyping and MVP Development

For entrepreneurs and solo developers validating a business idea, speed is critical. Mod is perfectly suited for building a Minimum Viable Product (MVP) quickly. By leveraging the pre-designed components and layouts, a developer can construct a fully functional, credible-looking prototype in days instead of weeks. This allows for faster user testing, feedback collection, and iteration without significant upfront investment in custom UI/UX design.

Standardizing UI Across Development Teams

In growing teams, maintaining a consistent look and feel across different features and modules can be challenging. Mod acts as a single source of truth for the UI. By adopting Mod as the base design system, teams ensure that all developers are using the same components, spacing, and colors. This standardization reduces design debt, streamlines code reviews, and makes onboarding new developers easier, as they can immediately work with a familiar, documented set of UI elements.

Enhancing Legacy Applications

Modernizing the user interface of an older, functional application can be a daunting task. Mod provides a straightforward path to a UI refresh without a complete front-end rewrite. Because it is framework-agnostic, developers can incrementally replace outdated components and styles with Mod's modern equivalents. This allows for a gradual, low-risk improvement of the application's aesthetics and usability, bringing it up to current standards without disrupting core functionality.

Building Internal Tools and Admin Panels

Internal dashboards, admin panels, and operational tools often do not justify a large design budget but still require clarity, functionality, and a professional appearance. Mod is an ideal solution for these projects. Its comprehensive component set includes many data-display elements like charts, tables, and stats cards that are essential for admin interfaces. Teams can build powerful, intuitive internal tools rapidly, ensuring efficiency for their operators without the overhead of a custom design process.

OpenMark AI

Validating a Model Before Feature Shipment

A development team has built a new AI-powered feature, such as an automated support ticket categorizer. Before launching it to real users, they use OpenMark AI to validate their chosen model. They describe the categorization task, run it against several potential models, and compare not just accuracy but also cost and response consistency. This ensures they deploy the most reliable and cost-effective model from day one, preventing poor user experiences and unexpected API bills.

Choosing Between Models for a Specific Workflow

A product manager needs to select an LLM for a new data extraction pipeline that processes research papers. They have a shortlist from various vendors. Using OpenMark AI, they create a benchmark using sample paragraphs from their domain. The results clearly show which model provides the most accurate and consistent entity extraction at a sustainable cost per document, providing a data-driven foundation for the procurement and technical implementation decision.

Testing Model Consistency and Stability

A developer notices that their current AI integration occasionally produces bizarre or off-topic responses, though it often works well. They use OpenMark AI's repeat-run capability to execute the same prompt multiple times for several candidate replacement models. The variance analysis in the results immediately highlights which models produce stable, predictable outputs every time and which ones suffer from the same inconsistency, guiding them toward a more robust solution.

Cost-Efficiency Analysis for Scaling Applications

An engineering lead is planning to scale an existing AI chat feature from hundreds to hundreds of thousands of users. They use OpenMark AI to benchmark their current model against newer, potentially cheaper alternatives. By comparing the real API cost per request alongside the quality scores for their specific conversation patterns, they can calculate the total cost of ownership at scale and make a strategic decision that balances budget with performance.
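The scaling calculation in this scenario is simple multiplication, sketched below. The user counts, requests per user, per-request costs, and quality scores are hypothetical placeholders; real figures would come from your own benchmark results and usage data.

```python
def monthly_cost(users: int, requests_per_user: float, cost_per_request_usd: float) -> float:
    """Projected monthly API spend: users x requests per user x cost per request."""
    return users * requests_per_user * cost_per_request_usd


# Hypothetical inputs: none of these figures come from a real benchmark.
REQUESTS_PER_USER_PER_MONTH = 40
candidates = {
    "current-model": {"cost_per_request_usd": 0.012, "quality": 0.91},
    "cheaper-model": {"cost_per_request_usd": 0.003, "quality": 0.88},
}

for users in (500, 500_000):
    for name, c in candidates.items():
        cost = monthly_cost(users, REQUESTS_PER_USER_PER_MONTH, c["cost_per_request_usd"])
        print(f"{users:>7} users  {name:<14} ${cost:>12,.2f}/month  quality={c['quality']}")
```

Under these assumed inputs, a per-request difference that looks negligible at 500 users (about $240 versus $60 per month) becomes about $240,000 versus $60,000 per month at 500,000 users, which is why the quality gap has to be weighed against cost at the target scale.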

Overview

About Mod

Mod is a comprehensive CSS framework and component library designed specifically for building modern, polished Software-as-a-Service (SaaS) user interfaces. At its core, Mod provides a design system that lets developers move quickly from concept to a functional, professional-looking application without extensive custom design work. It is built with a mobile-first, responsive philosophy, so applications adapt to any device, from smartphones to desktops. The library includes a large collection of pre-built, accessible components like buttons, forms, modals, and navigation bars, all styled cohesively. This removes the common bottleneck of UI design and front-end styling, enabling solo developers and teams to focus their energy on application logic and unique features. By offering a consistent, high-quality visual foundation, Mod reduces development time and design costs for teams aiming to ship robust SaaS products efficiently.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. It addresses a common problem in modern software development: choosing the right large language model (LLM) for a specific task. Instead of relying on marketing claims or generic leaderboards, developers and product teams test models against their actual needs. You describe the task you want to perform in plain language, such as data extraction, translation, or question answering, and the platform runs your prompts against a wide catalog of models in a single session, using real API calls.

The core value lies in the comparison it provides. You see not just a single output, but side-by-side results for cost per request, latency, scored quality, and, crucially, stability across repeat runs. The variance across those runs reveals which models are consistently reliable and which just got lucky once. A hosted credit system removes the setup work of managing multiple API keys from providers like OpenAI, Anthropic, or Google. OpenMark AI is built for informed, pre-deployment decisions: selecting a model that offers the best balance of quality, cost-efficiency, and consistency for your workflow before you ship an AI feature to users.

Frequently Asked Questions

Mod FAQ

What does "framework-agnostic" mean?

Framework-agnostic means that Mod is not built for or dependent on a single JavaScript framework like React or Vue. Instead, its components are delivered as plain HTML and CSS code snippets. You paste the HTML structure into your project's template files (JSX for React, .vue files for Vue, ERB templates for Rails, or plain HTML) and then link to Mod's CSS file. Note that JSX requires small syntax adjustments, such as writing the class attribute as className. Once the markup and stylesheet are in place, the styles apply as expected, which makes Mod compatible with virtually any web technology stack.

How does Mod handle customization and branding?

Mod is designed to be a solid foundation that you can customize to match your brand identity. The framework uses CSS custom properties (variables) for core design tokens like colors, fonts, and spacing. By overriding these variables in your own stylesheet, you can globally change the primary color, font family, or border radius across all components. For more specific changes, you can add your own utility classes or CSS rules to modify any component's appearance without breaking the core functionality.

Is Mod suitable for large-scale, enterprise applications?

Yes, Mod is built to scale. The component library covers a wide range of UI needs found in complex enterprise SaaS products. Its use of semantic HTML and focus on accessibility provides a strong, maintainable foundation. The organized design system and consistent coding patterns make it easier for large teams to collaborate and for the codebase to remain manageable as the application grows. It reduces the CSS bloat and inconsistency that often plagues large projects.

What is included in Mod's "yearly updates"?

The yearly updates refer to ongoing maintenance and improvement of the Mod library. This typically includes adding new components, enhancing existing ones with new variants or features, updating the design system to follow modern trends, ensuring compatibility with new browser versions, and patching any bugs. This commitment to updates ensures that projects built with Mod have a long shelf life and can continue to leverage a modern, supported toolkit without the need for constant manual upgrades.

OpenMark AI FAQ

How does OpenMark AI score the quality of model outputs?

OpenMark AI scores quality based on the specific task you define. For structured tasks like classification or extraction, it can use automated checks against expected formats or answers. For more creative or open-ended tasks, the platform may guide you to review and score outputs manually, or use comparative grading. The fundamental goal is to measure how well each model's output fulfills the intent you described, providing a task-relevant quality metric beyond generic benchmarks.
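For a structured task such as classification, an automated check against expected answers can be as simple as an exact-match rate. The sketch below is one possible form of such a check, not OpenMark AI's scoring method; the `exact_match_score` function and the sample labels are hypothetical.

```python
def exact_match_score(outputs: list[str], expected: list[str]) -> float:
    """Fraction of outputs equal to the expected label, ignoring case and surrounding whitespace."""
    if len(outputs) != len(expected):
        raise ValueError("outputs and expected must have the same length")
    if not expected:
        raise ValueError("at least one test case is required")
    hits = sum(o.strip().lower() == e.strip().lower() for o, e in zip(outputs, expected))
    return hits / len(expected)


# Hypothetical sentiment labels: three of the four outputs match after normalization.
expected_labels = ["positive", "negative", "neutral", "negative"]
model_outputs = ["Positive", "negative ", "positive", "negative"]

score = exact_match_score(model_outputs, expected_labels)
print(f"quality score: {score:.2f}")
```

Open-ended tasks do not reduce to a string comparison like this, which is why manual review or comparative grading is used for them instead.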

Do I need my own API keys to use OpenMark AI?

No, you do not need to provide or configure any external API keys. OpenMark AI operates on a hosted credit system. You purchase credits through the platform, and it manages all the API calls to its supported catalog of models from providers like OpenAI, Anthropic, Google, and others. This is a core feature designed to remove setup complexity and allow for seamless, centralized comparison across different vendors.

What is the benefit of testing stability with repeat runs?

Testing stability by running the same prompt multiple times is crucial because LLMs can be non-deterministic, meaning they don't always give the same answer to the same question. A single successful output might be lucky. By observing variance across repeat runs, you see which models are consistently reliable for your task. This helps you avoid deploying a model that will confuse users with erratic behavior, ensuring a more dependable and professional end-user experience.
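One simple way to quantify this kind of variance for short, categorical answers is the share of repeat runs that agree with the most common answer. The sketch below assumes that measure; `agreement_rate`, the model names, and the sample answers are hypothetical, and OpenMark AI may measure stability differently.

```python
from collections import Counter


def agreement_rate(answers: list[str]) -> float:
    """Share of repeat runs that returned the most common answer (1.0 means fully consistent)."""
    if not answers:
        raise ValueError("at least one run is required")
    _, top_count = Counter(answers).most_common(1)[0]
    return top_count / len(answers)


# Hypothetical outputs from five repeat runs of the same prompt.
repeat_runs = {
    "model-a": ["refund", "refund", "refund", "refund", "refund"],
    "model-b": ["refund", "billing", "refund", "other", "refund"],
}

rates = {name: agreement_rate(answers) for name, answers in repeat_runs.items()}
for name, rate in rates.items():
    print(name, rate)
```

A single run of either model could have returned "refund", so only the repeated runs reveal that the second model is less consistent.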

What kinds of tasks can I benchmark with OpenMark AI?

You can benchmark virtually any task you would use an LLM for. Common examples include text classification, translation, summarization, question answering, data extraction from documents, code generation, and testing responses for a Retrieval-Augmented Generation (RAG) system. The platform is built to be flexible. If you can describe the task in plain language, you can likely create a benchmark for it to find the optimal model.