Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Hostim.dev

Hostim.dev simplifies Docker app deployment with built-in databases on reliable, GDPR-compliant EU infrastructure.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks over 100 language models on your specific task to find the best one for cost, speed, and quality.

Last updated: March 26, 2026

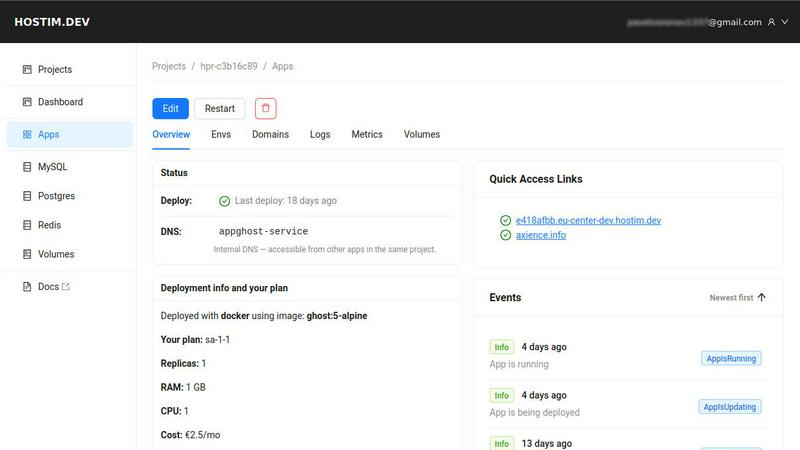

Feature Comparison

Hostim.dev

Easy Deployment with Docker and Git

Hostim.dev lets users deploy applications from Docker images, Git repositories, or Docker Compose files. This removes the need for extensive DevOps knowledge, so developers can go live with their projects in minutes.

Built-in Databases and Storage

The platform offers built-in MySQL, Postgres, Redis, and volume storage, making it easy for developers to provision databases instantly. All essential services are pre-wired and ready to use, which significantly reduces setup time and complexity.

Real-time Monitoring and Security

Hostim.dev provides security and observability by enabling HTTPS automatically, streaming live logs, and exposing metrics for performance tracking. Each project is isolated in its own environment, which improves security and keeps each workspace clean.

Predictable and Transparent Pricing

With pricing starting as low as €2.50 per month, Hostim.dev provides a straightforward billing model with no hidden fees. This transparency allows freelancers and agencies to quote costs to clients confidently, simplifying financial planning and project management.

OpenMark AI

Plain Language Task Description

You do not need to write complex code or structured prompts to begin benchmarking. OpenMark AI works from a simple principle: describe what you want the AI to do in your own words. The platform interprets your intent, whether it's "classify customer emails by sentiment" or "extract dates and names from legal documents," and constructs the necessary tests. This removes the technical barrier, allowing product managers and developers to focus on the task's objective rather than the intricacies of prompt engineering for multiple APIs.

Multi-Model Comparison in One Session

The platform enables you to test the same prompt or task against dozens of different LLMs simultaneously. This is a core differentiator from manual testing, where you would have to run separate, sequential calls to each model's API. With OpenMark AI, you launch one benchmark job and receive a unified results dashboard. This side-by-side comparison is essential for a clear, apples-to-apples evaluation of performance, putting models from different providers on an equal footing based on your specific criteria.

Holistic Performance Metrics

OpenMark AI measures more than accuracy or speed. It assesses model suitability along four dimensions: the quality of the output (scored against your task), the real cost per API request, the latency (response time), and the stability of the model across repeat runs. Seeing the variance in outputs is critical; it tells you whether a model is dependable or whether its first successful response was a fluke. This combined view is what makes cost-efficiency analysis possible: finding the best quality relative to what you pay.
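As a rough illustration of how the four dimensions can be combined, the sketch below collapses repeat-run results into one summary per model and ranks models by quality per dollar. It does not use OpenMark AI's API or export format; the `ModelRuns` structure, the `quality_per_usd` ratio, and every number are assumptions made up for the example.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class ModelRuns:
    """Repeat-run results for one model (hypothetical structure, made-up values)."""
    name: str
    quality: list[float]    # quality score per run, 0.0 to 1.0
    cost_usd: list[float]   # API cost per request, in USD
    latency_s: list[float]  # response time per request, in seconds


def summarize(runs: ModelRuns) -> dict:
    """Collapse repeat runs into quality, cost, latency, and stability figures."""
    avg_quality = mean(runs.quality)
    avg_cost = mean(runs.cost_usd)
    return {
        "model": runs.name,
        "quality": avg_quality,
        "cost_usd": avg_cost,
        "latency_s": mean(runs.latency_s),
        # Standard deviation of quality across runs: lower means more stable.
        "quality_spread": pstdev(runs.quality),
        # One possible cost-efficiency measure (an assumption, not a standard).
        "quality_per_usd": avg_quality / avg_cost,
    }


def rank_by_efficiency(results: list[ModelRuns]) -> list[dict]:
    """Return summaries sorted from most to least quality per dollar."""
    summaries = [summarize(r) for r in results]
    return sorted(summaries, key=lambda s: s["quality_per_usd"], reverse=True)


if __name__ == "__main__":
    example = [
        ModelRuns("model-a", [0.9, 0.9, 0.9], [0.01, 0.01, 0.01], [1.2, 1.1, 1.3]),
        ModelRuns("model-b", [1.0, 0.5, 0.9], [0.002, 0.002, 0.002], [0.6, 0.7, 0.5]),
    ]
    for row in rank_by_efficiency(example):
        print(row)
```

In this made-up data the cheaper model ranks first on quality per dollar but also shows a much larger spread, which is the kind of trade-off a single-run test would hide.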

Hosted Benchmarking with Credits

To simplify access and comparison, OpenMark AI uses a credit-based system. You purchase credits through OpenMark AI, and the platform handles all the backend API calls to its supported catalog of models. You do not need to source, configure, and manage separate API keys and accounts for every model provider you wish to test, which eliminates significant setup overhead and billing complexity. This approach makes large-scale benchmarking accessible and manageable for teams of any size.

Use Cases

Hostim.dev

Freelancers Managing Client Projects

Freelancers can use Hostim.dev to deploy client applications quickly and manage costs effectively. Per-project billing makes handover straightforward and gives each client a clear view of the costs involved.

Agencies Handling Multiple Clients

Agencies benefit from the ability to isolate client projects within separate Kubernetes namespaces. This feature enables them to maintain control over costs and manage resources efficiently, ensuring that each client's project remains distinct and secure.

Students Learning Real-World Skills

Students can take advantage of Hostim.dev to gain hands-on experience with real infrastructure. The platform's free trial and student credits provide an opportunity to deploy Docker applications, databases, and other essential services that can enhance their portfolios.

Startups Releasing MVPs

Startups can leverage Hostim.dev to rapidly deploy their minimum viable products (MVPs) without the burden of complex infrastructure management. The platform's quick deployment capabilities and flat pricing structure allow startups to focus on product development and market entry.

OpenMark AI

Validating a Model Before Feature Shipment

A development team has built a new AI-powered feature, such as an automated support ticket categorizer. Before launching it to real users, they use OpenMark AI to validate their chosen model. They describe the categorization task, run it against several potential models, and compare not just accuracy but also cost and response consistency. This ensures they deploy the most reliable and cost-effective model from day one, preventing poor user experiences and unexpected API bills.

Choosing Between Models for a Specific Workflow

A product manager needs to select an LLM for a new data extraction pipeline that processes research papers. They have a shortlist from various vendors. Using OpenMark AI, they create a benchmark using sample paragraphs from their domain. The results clearly show which model provides the most accurate and consistent entity extraction at a sustainable cost per document, providing a data-driven foundation for the procurement and technical implementation decision.

Testing Model Consistency and Stability

A developer notices that their current AI integration occasionally produces bizarre or off-topic responses, though it often works well. They use OpenMark AI's repeat-run capability to execute the same prompt multiple times for several candidate replacement models. The variance analysis in the results immediately highlights which models produce stable, predictable outputs every time and which ones suffer from the same inconsistency, guiding them toward a more robust solution.

Cost-Efficiency Analysis for Scaling Applications

An engineering lead is planning to scale an existing AI chat feature from hundreds to hundreds of thousands of users. They use OpenMark AI to benchmark their current model against newer, potentially cheaper alternatives. By comparing the real API cost per request alongside the quality scores for their specific conversation patterns, they can calculate the total cost of ownership at scale and make a strategic decision that balances budget with performance.
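The arithmetic behind this kind of projection is simple enough to sketch. The example below multiplies a per-request cost by expected traffic to estimate a monthly bill for two candidate models. The user count, requests per user, prices, and model names are all hypothetical; real figures would come from your own benchmark results and usage data.

```python
def monthly_cost(users: int, requests_per_user: float, cost_per_request: float) -> float:
    """Estimate the monthly API bill: users x requests per user x cost per request."""
    return users * requests_per_user * cost_per_request


if __name__ == "__main__":
    # Hypothetical traffic assumptions for the scaled-up feature.
    users = 200_000
    requests_per_user = 30  # per month

    # Hypothetical benchmark results: (cost per request in USD, quality score).
    candidates = {
        "current-model": (0.004, 0.91),
        "cheaper-model": (0.001, 0.88),
    }

    for name, (cost, quality) in candidates.items():
        total = monthly_cost(users, requests_per_user, cost)
        print(f"{name}: about ${total:,.0f} per month at quality {quality:.2f}")
```

With these assumed numbers the cheaper model cuts the projected bill to a quarter while giving up a few points of quality; whether that trade is acceptable is a product decision, not something the arithmetic can settle.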

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) designed to streamline the deployment and management of containerized applications. It empowers developers by removing the complexities associated with traditional infrastructure management, allowing them to focus solely on their code. With Hostim.dev, users can leverage familiar tools such as Docker and Git to transition their applications from development to production with remarkable speed and ease. The platform automates numerous backend processes, including the provisioning of essential services like databases and storage, and it includes important security features like automatic HTTPS. Each project benefits from its own isolated Kubernetes namespace, ensuring a clean and secure environment for development. Operating on bare-metal servers located in Germany, Hostim.dev guarantees GDPR-compliant hosting, making it a suitable choice for developers who prioritize data protection. The core value proposition lies in its ability to combine the flexibility of containerization with the simplicity of a managed environment, offering flat, predictable pricing that eliminates the unpleasant surprises often associated with larger cloud providers. Ultimately, Hostim.dev serves as a powerful tool that allows developers to concentrate on building their applications rather than managing the underlying infrastructure.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. It is designed to solve a critical problem in modern software development: choosing the right large language model (LLM) for a specific task. Instead of relying on marketing claims or generic leaderboards, OpenMark AI allows developers and product teams to test models based on their actual, unique needs. You describe the task you want to perform in plain language, such as data extraction, translation, or question answering. The platform then runs your prompts against a wide catalog of models in a single session, using real API calls. The core value lies in the comprehensive comparison it provides. You see not just a single output, but side-by-side results for cost per request, latency, scored quality, and, crucially, stability across repeat runs. This shows variance, revealing which models are consistently reliable versus those that just got lucky once. By using a hosted credit system, it eliminates the complex setup of managing multiple API keys from providers like OpenAI, Anthropic, or Google. OpenMark AI is built for making informed, pre-deployment decisions, ensuring you select a model that offers the best balance of quality, cost-efficiency, and consistency for your specific workflow before you ship an AI feature to users.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

Hostim.dev's free tier is a 5-day trial with full access to deploy any Docker image, Git repository, or Docker Compose file. No credit card is required, which makes it well suited to evaluation.

Can I deploy with just a Compose file?

Yes, Hostim.dev supports deployment using just a Docker Compose file. Users can easily paste their Compose file, and the platform will handle the rest, allowing for a quick and straightforward deployment process.

Where is my app hosted?

All applications hosted on Hostim.dev are deployed on bare-metal servers located in Germany. This ensures compliance with GDPR regulations and guarantees that data remains within the European Union.

Do I need to know Kubernetes?

No, users do not need to have prior knowledge of Kubernetes to use Hostim.dev. The platform abstracts the complexities of Kubernetes management, allowing developers to focus on their applications rather than the underlying infrastructure.

OpenMark AI FAQ

How does OpenMark AI score the quality of model outputs?

OpenMark AI scores quality based on the specific task you define. For structured tasks like classification or extraction, it can use automated checks against expected formats or answers. For more creative or open-ended tasks, the platform may guide you to review and score outputs manually, or use comparative grading. The goal is to measure how well each model's output fulfills the intent you described, providing a task-relevant quality metric beyond generic benchmarks.
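To make "automated checks against expected formats or answers" concrete, here is a minimal sketch of two such checks: exact-match accuracy for classification labels and a required-fields check for JSON extraction output. This is not OpenMark AI's scoring code, which the source does not describe; the function names and sample data are hypothetical.

```python
import json


def score_classification(outputs: list[str], expected: list[str]) -> float:
    """Fraction of outputs matching the expected label, ignoring case and whitespace."""
    if not expected or len(outputs) != len(expected):
        raise ValueError("outputs and expected must be non-empty and the same length")
    hits = sum(o.strip().lower() == e.strip().lower() for o, e in zip(outputs, expected))
    return hits / len(expected)


def has_required_fields(raw_output: str, fields: list[str]) -> bool:
    """Format check: the output must be a JSON object containing every required field."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and all(field in parsed for field in fields)


if __name__ == "__main__":
    # Made-up model outputs for a sentiment classification task.
    outputs = ["Positive", "negative ", "neutral"]
    expected = ["positive", "negative", "negative"]
    print(score_classification(outputs, expected))

    # Made-up model output for an extraction task.
    print(has_required_fields('{"name": "A. Smith", "date": "2024-01-01"}', ["name", "date"]))
    print(has_required_fields("Name: A. Smith", ["name", "date"]))
```

Checks like these only suit tasks with a known right answer or a fixed output shape; open-ended outputs still need manual or comparative grading, as noted above.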

Do I need my own API keys to use OpenMark AI?

No, you do not need to provide or configure any external API keys. OpenMark AI operates on a hosted credit system. You purchase credits through the platform, and it manages all the API calls to its supported catalog of models from providers like OpenAI, Anthropic, Google, and others. This is a core feature designed to remove setup complexity and allow for seamless, centralized comparison across different vendors.

What is the benefit of testing stability with repeat runs?

Testing stability by running the same prompt multiple times is crucial because LLMs can be non-deterministic, meaning they don't always give the same answer to the same question. A single successful output might be lucky. By observing variance across repeat runs, you see which models are consistently reliable for your task. This helps you avoid deploying a model that will confuse users with erratic behavior, ensuring a more dependable and professional end-user experience.
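One simple way to quantify this is an agreement rate: run the same prompt several times and measure how often the most common answer appears. The sketch below does that with stand-in functions in place of real model calls. The `generate` callables, the prompt, and the outputs are hypothetical, and agreement rate is only one possible stability measure, not necessarily the one OpenMark AI reports.

```python
import itertools
from collections import Counter
from typing import Callable


def agreement_rate(generate: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Share of repeat runs that produced the single most common output."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outputs = [generate(prompt).strip().lower() for _ in range(runs)]
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / runs


def stable_model(prompt: str) -> str:
    """Stand-in for a model that always returns the same label."""
    return "negative"


def make_flaky_model() -> Callable[[str], str]:
    """Stand-in for a model whose answers drift between runs."""
    answers = itertools.cycle(["negative", "positive", "negative", "neutral", "negative"])
    return lambda prompt: next(answers)


if __name__ == "__main__":
    prompt = "Classify the sentiment of: 'The update broke my login.'"
    print("stable:", agreement_rate(stable_model, prompt))
    print("flaky: ", agreement_rate(make_flaky_model(), prompt))
```

Exact string agreement works for short, structured answers such as labels; free-form text would need a looser comparison, for example scoring each run and looking at the spread of scores.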

What kinds of tasks can I benchmark with OpenMark AI?

You can benchmark virtually any task you would use an LLM for. Common examples include text classification, translation, summarization, question answering, data extraction from documents, code generation, and testing responses for a Retrieval-Augmented Generation (RAG) system. The platform is built to be flexible. If you can describe the task in plain language, you can likely create a benchmark for it to find the optimal model.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) designed to provide developers with a straightforward way to deploy Docker applications on EU-hosted infrastructure. It focuses on simplifying the deployment process while ensuring that developers retain control over their applications and the environment they run in. Many users seek alternatives to Hostim.dev due to varying needs related to pricing, specific features, or preferred cloud platforms. Understanding what to look for when selecting an alternative is essential; users should consider aspects such as ease of use, the extent of automation, compliance with regulations, and available integrations that align with their existing workflows. When evaluating alternatives, it’s also important to assess the level of support provided, the scalability of the platform, and the overall performance of the services offered. Users should prioritize platforms that minimize the complexity of deployment and infrastructure management while maximizing security and reliability. Ultimately, the right alternative should empower developers to focus on building their applications rather than getting bogged down by infrastructure challenges.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It allows teams to test many LLMs simultaneously on their specific use case, comparing real-world metrics like cost, latency, output quality, and stability. This helps in making informed, pre-deployment decisions about which model to integrate into a product. Users may explore alternatives for various reasons. Some might seek different pricing structures or free tiers with more generous limits. Others may require features like on-premises deployment, integration with specific development environments, or support for a different set of models not covered by the current catalog. When evaluating other options, consider the core need: objective, apples-to-apples comparison. Look for tools that test with real API calls, provide metrics beyond just speed and cost, and offer insights into output consistency. The goal is to find a solution that delivers actionable data to confidently select the right model for your workflow and budget.