Fallom vs Hostim.dev

Side-by-side comparison to help you choose the right product.

Fallom

Fallom provides real-time observability for tracking and debugging your LLM and AI agent operations.

Last updated: February 28, 2026

Hostim.dev

Hostim.dev simplifies Docker app deployment with built-in databases on reliable, GDPR-compliant EU infrastructure.

Last updated: March 1, 2026

Visual Comparison

Fallom

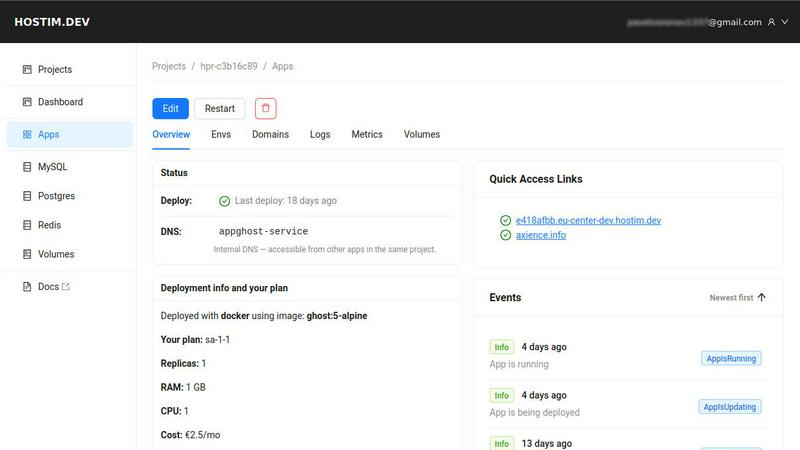

Hostim.dev

Feature Comparison

Fallom

End-to-End LLM Tracing

Fallom provides complete, granular tracing for every interaction with large language models. You can see the full sequence of events for any AI task, from the initial user prompt, through intermediate reasoning steps and tool calls, to the final response. Each trace includes the raw input and output, the specific model used, token counts, latency metrics, and the calculated cost. This per-step detail is what makes it possible to understand how your AI applications behave in production, and therefore to debug and optimize them.
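
To make the fields listed above concrete, here is a minimal sketch of how one trace might be modeled as a data structure. The `Span` and `Trace` classes and their field names are hypothetical illustrations, not Fallom's actual schema; the point is only how per-step records (LLM calls and tool calls) roll up into trace-level totals.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One step of an AI task: an LLM call or a tool call (hypothetical schema)."""
    name: str
    kind: str                    # "llm" or "tool"
    input_text: str
    output_text: str
    model: Optional[str] = None  # only set for LLM calls
    prompt_tokens: int = 0
    completion_tokens: int = 0
    latency_ms: float = 0.0
    cost_usd: float = 0.0


@dataclass
class Trace:
    """The full, ordered sequence of spans for a single user request."""
    trace_id: str
    spans: list[Span] = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(s.prompt_tokens + s.completion_tokens for s in self.spans)

    def total_cost(self) -> float:
        return sum(s.cost_usd for s in self.spans)

    def total_latency_ms(self) -> float:
        # Assumes the steps ran sequentially, so their latencies add up.
        return sum(s.latency_ms for s in self.spans)


trace = Trace("t-001", [
    Span("plan", "llm", "What's the weather in Berlin?", "call get_weather",
         model="gpt-4o", prompt_tokens=120, completion_tokens=15,
         latency_ms=640.0, cost_usd=0.0006),
    Span("get_weather", "tool", '{"city": "Berlin"}', '{"temp_c": 7}',
         latency_ms=85.0),
    Span("answer", "llm", '{"temp_c": 7}', "It is 7 degrees C in Berlin.",
         model="gpt-4o", prompt_tokens=160, completion_tokens=12,
         latency_ms=510.0, cost_usd=0.0005),
])
print(trace.total_tokens(), trace.total_latency_ms(), trace.total_cost())
```

Keeping tool calls as spans alongside LLM calls is what lets a single trace show the whole chain, not just the model invocations.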

Real-Time Monitoring Dashboard

The platform offers a live dashboard that displays all LLM calls as they happen in production. You can monitor activity in real time, watching traces for different models, users, or sessions stream in. This dashboard allows you to see key metrics at a glance, such as request volume, average latency, and error rates. By providing a live view of your system's health, it enables teams to spot anomalies, performance degradation, or unexpected cost spikes immediately, facilitating faster incident response.

Cost Attribution and Analysis

A fundamental aspect of managing AI applications is understanding and controlling expenses. Fallom automatically attributes costs to their source. You can break down spending by AI model, by individual user or customer, by internal team, or by specific feature. This transparent cost tracking is essential for accurate budgeting, internal chargebacks, and identifying inefficient or expensive patterns in your LLM usage, helping you make informed decisions about model selection and optimization.
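
Cost attribution boils down to pricing each call from its token counts and then grouping by whichever attribute you care about. A minimal sketch, assuming each call record carries `model`, `user`, `team`, and `feature` tags; the model names and per-1K-token prices are hypothetical placeholders, not real provider rates.

```python
from collections import defaultdict

# Hypothetical per-1K-token prices in USD; real prices vary by provider and change over time.
PRICES_PER_1K = {
    "model-a": {"prompt": 0.0025, "completion": 0.0100},
    "model-b": {"prompt": 0.0030, "completion": 0.0150},
}


def call_cost(model: str, prompt_tokens: int, completion_tokens: int) -> float:
    """Cost of one LLM call, computed from its token counts."""
    p = PRICES_PER_1K[model]
    return (prompt_tokens * p["prompt"] + completion_tokens * p["completion"]) / 1000


def cost_by(calls: list[dict], dimension: str) -> dict[str, float]:
    """Sum call costs grouped by one tag, e.g. 'model', 'user', 'team' or 'feature'."""
    totals: dict[str, float] = defaultdict(float)
    for c in calls:
        totals[c[dimension]] += call_cost(c["model"], c["prompt_tokens"], c["completion_tokens"])
    return dict(totals)


calls = [
    {"model": "model-a", "user": "alice", "team": "search", "feature": "summarize",
     "prompt_tokens": 1000, "completion_tokens": 200},
    {"model": "model-b", "user": "bob", "team": "search", "feature": "chat",
     "prompt_tokens": 2000, "completion_tokens": 500},
    {"model": "model-a", "user": "alice", "team": "support", "feature": "chat",
     "prompt_tokens": 500, "completion_tokens": 100},
]
print(cost_by(calls, "feature"))
print(cost_by(calls, "user"))
```

Because every call is tagged once at trace time, the same records answer "cost per model", "cost per customer", and "cost per feature" without separate bookkeeping.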

Compliance and Audit Readiness

For enterprises operating in regulated industries, Fallom is built with compliance as a core feature. It maintains complete, immutable audit trails of every LLM interaction, supporting requirements for standards like SOC 2, GDPR, and the EU AI Act. Features include detailed input/output logging, model version tracking, user consent recording, and session-level context. This ensures you have a verifiable record of your AI's operations for security reviews, regulatory audits, and internal governance.
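
One generic way to make an append-only audit trail tamper-evident is hash chaining: each record stores a SHA-256 hash over its own content plus the previous record's hash. This sketch shows that general technique only; it is not a description of how Fallom implements immutability, and the `AuditLog` class and entry fields are hypothetical.

```python
import hashlib
import json


def _digest(prev_hash: str, entry: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    payload = prev_hash + json.dumps(entry, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each record commits to the hash of the record before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, entry: dict) -> str:
        prev = self.records[-1]["hash"] if self.records else self.GENESIS
        h = _digest(prev, entry)
        self.records.append({"entry": entry, "prev": prev, "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute the chain; an edited entry or a record dropped mid-chain breaks it."""
        prev = self.GENESIS
        for rec in self.records:
            if rec["prev"] != prev or _digest(prev, rec["entry"]) != rec["hash"]:
                return False
            prev = rec["hash"]
        return True


log = AuditLog()
log.append({"user": "u-17", "model_version": "model-a@2025-01",
            "input": "<prompt>", "output": "<response>", "consent": True})
log.append({"user": "u-42", "model_version": "model-a@2025-01",
            "input": "<prompt>", "output": "<response>", "consent": True})
print(log.verify())                          # True
log.records[0]["entry"]["output"] = "edited"
print(log.verify())                          # False
```

Hash chaining detects edits and removals inside the chain; detecting truncation of the newest records additionally requires keeping the latest hash somewhere outside the log.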

Hostim.dev

Easy Deployment with Docker and Git

Hostim.dev lets you deploy applications from a Docker image, a Git repository, or a Docker Compose file. Because the platform handles the infrastructure, developers without extensive DevOps knowledge can take a project live in minutes.

Built-in Databases and Storage

The platform offers built-in MySQL, Postgres, Redis, and volume storage, making it easy for developers to provision databases instantly. All essential services are pre-wired and ready to use, which significantly reduces setup time and complexity.
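
How the platform passes connection details to your container is not specified here. A common PaaS convention is a single connection-URL environment variable; assuming that convention (the name `DATABASE_URL` is a hypothetical choice, not a documented Hostim.dev variable), an app can turn it into connection settings with the standard library alone:

```python
import os
from urllib.parse import unquote, urlsplit


def db_settings(env_var: str = "DATABASE_URL") -> dict:
    """Parse a URL such as postgres://user:pass@host:5432/dbname into connection settings."""
    url = os.environ.get(env_var)
    if not url:
        raise RuntimeError(f"{env_var} is not set")
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        # urlsplit leaves percent-escapes in credentials, so decode them explicitly.
        "user": unquote(parts.username or ""),
        "password": unquote(parts.password or ""),
        "host": parts.hostname,
        "port": parts.port,
        "database": parts.path.lstrip("/"),
    }


if __name__ == "__main__":
    # Example value for local testing only.
    os.environ.setdefault("DATABASE_URL", "postgres://app:s3cr%40t@db.internal:5432/shop")
    print(db_settings())
```

Reading settings from the environment keeps credentials out of the image, so the same container works against any pre-provisioned database.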

Real-time Monitoring and Security

Hostim.dev enables HTTPS automatically and provides live logs and performance metrics. Each project runs in its own isolated environment, which improves security and keeps each workspace clean.

Predictable and Transparent Pricing

With pricing starting as low as €2.50 per month, Hostim.dev provides a straightforward billing model with no hidden fees. This transparency allows freelancers and agencies to quote costs to clients confidently, simplifying financial planning and project management.

Use Cases

Fallom

Debugging and Improving AI Agent Workflows

When a complex AI agent that uses multiple tools and LLM calls fails or behaves unexpectedly, pinpointing the root cause is challenging. Fallom's tracing allows developers to replay the exact sequence of steps, examine the prompts and responses at each stage, and view the arguments and results of every tool call. This visibility turns debugging from a guessing game into a systematic process, drastically reducing the time to resolve issues and improve agent reliability.
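
The replay described above amounts to ordering a trace's steps in time and finding where things first went wrong, which is often earlier than the step the user saw fail. A minimal sketch, assuming each step carries a start time, a status, and a detail string; the `Step` record and `first_failure` helper are hypothetical:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One agent step (hypothetical record, not Fallom's schema)."""
    name: str
    start_ms: int
    status: str   # "ok" or "error"
    detail: str   # prompt/response for LLM steps, arguments/result for tool calls


def first_failure(steps: list[Step]) -> Optional[tuple[Step, list[Step]]]:
    """Replay steps in start order; return the first failed step and the steps before it."""
    ordered = sorted(steps, key=lambda s: s.start_ms)
    for i, step in enumerate(ordered):
        if step.status == "error":
            return step, ordered[:i]
    return None


# The user only sees the final answer fail; the root cause is an earlier tool call.
steps = [
    Step("answer", 900, "error", "model refused: missing order data"),
    Step("plan", 0, "ok", "call lookup_order(order_id='A-1')"),
    Step("lookup_order", 400, "error", "KeyError: 'A-1'"),
]
result = first_failure(steps)
if result:
    failed, context = result
    print(f"first failure: {failed.name} ({failed.detail})")
    for s in context:
        print(f"  preceded by: {s.name} -> {s.detail}")
```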

Managing and Optimizing AI Operational Costs

As AI applications scale, costs can become unpredictable and difficult to manage. Fallom addresses this by providing clear, actionable data on where every dollar is spent. Product and engineering leads can use Fallom to identify which features or customers are the most expensive, compare the cost-performance ratio of different models like GPT-4o versus Claude, and set alerts for budget overruns. This enables proactive cost control and ensures sustainable scaling.
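
The budget alerts mentioned above reduce to comparing month-to-date spend with a budget. A minimal sketch, assuming per-feature monthly budgets and a warning threshold at 80%; the function name, threshold, and figures are hypothetical rather than actual Fallom settings:

```python
def budget_alerts(spend: dict[str, float], budgets: dict[str, float],
                  warn_at: float = 0.8) -> list[str]:
    """Return alert messages for features whose month-to-date spend nears or exceeds budget."""
    alerts = []
    for feature, spent in sorted(spend.items()):
        budget = budgets.get(feature)
        if budget is None:
            continue  # no budget configured for this feature
        ratio = spent / budget
        if ratio >= 1.0:
            alerts.append(f"OVER: {feature} spent {spent:.2f} of {budget:.2f}")
        elif ratio >= warn_at:
            alerts.append(f"WARN: {feature} at {ratio:.0%} of budget")
    return alerts


print(budget_alerts({"chat": 930.0, "summarize": 120.0, "search": 1250.0},
                    {"chat": 1000.0, "summarize": 500.0, "search": 1200.0}))
```

Warning before the budget is exhausted, rather than only when it is exceeded, is what turns cost data into proactive control.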

Ensuring Compliance and Auditability

Companies in finance, healthcare, or legal services that use AI must demonstrate compliance with strict regulations. Fallom serves as a system of record for all AI activity. It automatically logs the necessary data (who used the system, what was asked, which model version answered, and what it said), creating a defensible audit trail. This is essential for passing security audits, responding to data subject requests, and proving adherence to industry regulations.

Performance Monitoring and Reliability Engineering

Site Reliability Engineering (SRE) principles apply to AI systems as well. Teams use Fallom to establish performance baselines for their LLM calls, monitor latency and error rate Service Level Objectives (SLOs), and set up alerts for degradation. The timing waterfall charts help visualize where bottlenecks occur in multi-step chains, allowing engineers to optimize slow steps and ensure a consistent, reliable user experience for AI-powered features.
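
A latency SLO is usually stated as a percentile rather than an average. A minimal sketch of checking one window of LLM calls against a p95 latency target and an error-rate ceiling; the thresholds and sample data are hypothetical, and the percentile uses the nearest-rank method:

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, with pct between 0 and 100."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def check_slo(latencies_ms: list[float], errors: int,
              p95_target_ms: float, max_error_rate: float) -> dict:
    """Compare one window of LLM calls against latency and error-rate objectives."""
    p95 = percentile(latencies_ms, 95)
    error_rate = errors / len(latencies_ms)
    return {
        "p95_ms": p95,
        "error_rate": error_rate,
        "latency_ok": p95 <= p95_target_ms,
        "errors_ok": error_rate <= max_error_rate,
    }


# 20 calls: most are fast, but slow outliers push p95 past a 2-second target.
latencies = [700.0] * 17 + [900.0, 3000.0, 5200.0]
print(check_slo(latencies, errors=1, p95_target_ms=2000.0, max_error_rate=0.1))
```

The mean latency of this window is 1,050 ms, comfortably under the target; only the percentile exposes the slow tail that users actually notice.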

Hostim.dev

Freelancers Managing Client Projects

Freelancers can use Hostim.dev to deploy client applications quickly and keep costs under control. Because billing is per project, handing a project over to a client is straightforward, and the client sees exactly what that project costs.

Agencies Handling Multiple Clients

Agencies benefit from the ability to isolate client projects within separate Kubernetes namespaces. This feature enables them to maintain control over costs and manage resources efficiently, ensuring that each client's project remains distinct and secure.
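
Hostim.dev creates these namespaces itself, and its naming scheme is not described here. For agencies scripting around Kubernetes, the relevant constraint is that a namespace name must be an RFC 1123 DNS label: at most 63 characters, lowercase letters, digits, or hyphens, starting and ending with a letter or digit. A minimal sketch that derives such a name from a client and project name; the `namespace_for` helper is hypothetical:

```python
import re

DNS_LABEL = re.compile(r"^[a-z0-9]([-a-z0-9]*[a-z0-9])?$")


def namespace_for(client: str, project: str) -> str:
    """Derive a valid Kubernetes namespace name (RFC 1123 label) from client and project names."""
    raw = f"{client}-{project}".lower()
    name = re.sub(r"[^a-z0-9-]+", "-", raw)  # replace disallowed characters with hyphens
    name = re.sub(r"-{2,}", "-", name)        # collapse runs of hyphens
    name = name[:63].strip("-")               # max 63 chars; must start and end alphanumeric
    if not DNS_LABEL.match(name):
        raise ValueError(f"cannot derive a namespace from {client!r}/{project!r}")
    return name


print(namespace_for("Acme Corp.", "Web Shop (staging)"))
```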

Students Learning Real-World Skills

Students can use Hostim.dev to gain hands-on experience with real infrastructure. The free trial and student credits let them deploy Docker applications, databases, and other essential services, and build projects they can show in their portfolios.

Startups Releasing MVPs

Startups can leverage Hostim.dev to rapidly deploy their minimum viable products (MVPs) without the burden of complex infrastructure management. The platform's quick deployment capabilities and flat pricing structure allow startups to focus on product development and market entry.

Overview

About Fallom

Fallom is an AI-native observability platform built from the ground up for teams developing applications with large language models (LLMs) and AI agents. Traditional monitoring tools can report that a request failed or ran slowly, but not which prompt, model output, or tool call caused it. Fallom provides the visibility needed to understand, manage, and improve AI-powered systems in production. It automatically traces every LLM call, capturing the exact prompts sent, the model's outputs, any tool or function calls made, token usage, latency, and per-call cost. This end-to-end tracing is the cornerstone of AI observability.

The platform is aimed at engineering and product teams who need actionable insight rather than simple logs. Its core value proposition is comprehensive, real-time visibility into AI workloads, which helps organizations optimize performance, control costs, troubleshoot issues quickly, and maintain compliance with enterprise and regulatory standards. Because the SDK is OpenTelemetry-native, teams can start tracing their applications in minutes and build the rest of their AI observability on that foundation.

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) for deploying and managing containerized applications. It removes most of the work of traditional infrastructure management so developers can focus on their code, and it lets them move applications from development to production quickly with familiar tools such as Docker and Git. The platform automates backend tasks such as provisioning databases and storage, and it enables HTTPS automatically. Each project runs in its own isolated Kubernetes namespace, keeping environments clean and separated.

Hostim.dev runs on bare-metal servers in Germany, which supports GDPR-compliant hosting and suits developers who prioritize data protection. Its core value proposition is combining the flexibility of containers with the simplicity of a managed environment, at flat, predictable prices that avoid the billing surprises often associated with larger cloud providers.

Frequently Asked Questions

Fallom FAQ

What is AI observability and why is it different?

AI observability is the practice of gaining deep, actionable insight into the behavior and performance of AI systems, particularly those based on LLMs. It differs from traditional application monitoring because LLMs are non-deterministic. You need to see not just whether a call failed but why: was the prompt poorly constructed, did a tool call return an error, or did the model hallucinate? Observability supplies the context (prompts, outputs, and intermediate steps) needed to answer these questions.

How difficult is it to integrate Fallom into my existing application?

Integration is designed to be straightforward. Fallom provides an OpenTelemetry-native SDK; OpenTelemetry is the open industry standard for collecting traces, metrics, and logs. In most cases, you can instrument your application by adding a few lines of code to your LLM client initialization. The goal is to have basic tracing running in under five minutes, without major changes to your application architecture and without noticeable performance overhead.
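
Fallom's SDK calls aren't shown here, so rather than guess at them, this sketch uses a plain-Python decorator to illustrate what instrumenting an LLM client involves conceptually: wrap the call, time it, and record its arguments, token usage, and status. The `traced` decorator, `fake_llm_call` function, and attribute names are hypothetical; a real OpenTelemetry integration would emit spans through the OpenTelemetry SDK and an exporter instead of appending to a list.

```python
import functools
import time

RECORDED_SPANS: list[dict] = []  # stand-in for an exporter that ships spans to a backend


def traced(span_name: str):
    """Hypothetical decorator: time the wrapped LLM call and record a span-like dict."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = {"name": span_name, "attributes": dict(kwargs), "status": "ok"}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                # Copy token usage from the response, if the client returns it.
                if isinstance(result, dict) and "usage" in result:
                    span["attributes"].update(result["usage"])
                return result
            except Exception:
                span["status"] = "error"
                raise
            finally:
                span["duration_ms"] = (time.perf_counter() - start) * 1000
                RECORDED_SPANS.append(span)
        return wrapper
    return decorator


@traced("chat.completion")
def fake_llm_call(prompt: str, model: str = "model-a") -> dict:
    # Stand-in for a provider client call; returns text plus token usage.
    return {"text": f"echo: {prompt}", "usage": {"prompt_tokens": 3, "completion_tokens": 2}}


fake_llm_call(prompt="hello", model="model-a")
print(RECORDED_SPANS[0]["name"], RECORDED_SPANS[0]["status"], RECORDED_SPANS[0]["attributes"])
```

Wrapping the client once, at initialization, is why instrumentation needs only a few lines: every call made through that client is traced without touching the call sites.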

Can Fallom handle sensitive or private data?

Yes. Fallom includes a Privacy Mode for handling sensitive information. This mode allows you to configure content redaction, so that specific data fields or entire prompt/response contents are not captured in the logs, while still preserving essential metadata for tracing and metrics. You can maintain full telemetry for debugging and costing without storing confidential user data, aligning with data privacy policies.
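
Privacy Mode's configuration options are not described here, so the following is only a sketch of the general idea: strip or mask content fields while keeping the metadata that tracing and cost metrics depend on. The field names, the two modes, and the simple e-mail pattern are hypothetical choices for illustration.

```python
import copy
import re

# Hypothetical: which span fields carry prompt/response text.
CONTENT_FIELDS = ("input", "output")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(span: dict, mode: str = "full") -> dict:
    """Return a redacted copy of a span; metadata such as tokens, latency and cost is kept.

    mode="full" replaces prompt/response text entirely;
    mode="partial" keeps the text but masks e-mail addresses.
    """
    if mode not in ("full", "partial"):
        raise ValueError(f"unknown mode: {mode}")
    out = copy.deepcopy(span)  # never mutate the caller's span
    for key in CONTENT_FIELDS:
        if key not in out:
            continue
        out[key] = "[REDACTED]" if mode == "full" else EMAIL.sub("[EMAIL]", out[key])
    return out


span = {
    "model": "model-a", "prompt_tokens": 42, "latency_ms": 310, "cost_usd": 0.0004,
    "input": "Email jane.doe@example.com the invoice",
    "output": "Sent the invoice to jane.doe@example.com",
}
print(redact(span, "full"))
print(redact(span, "partial"))
```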

Does Fallom support all LLM providers and frameworks?

Fallom is built to be provider-agnostic. It works with all major LLM providers like OpenAI, Anthropic, Google Gemini, and open-source models. The OpenTelemetry foundation means it can integrate with any framework or custom code that makes LLM calls. This prevents vendor lock-in and ensures you can maintain a unified observability platform even if your tech stack evolves or you switch model providers.

Hostim.dev FAQ

What does the free tier include?

The free tier of Hostim.dev includes a 5-day trial with full access to deploy any Docker image, Git repository, or Docker Compose file. It allows users to explore the platform without any credit card requirement, making it ideal for evaluation purposes.

Can I deploy with just a Compose file?

Yes, Hostim.dev supports deployment using just a Docker Compose file. Users can easily paste their Compose file, and the platform will handle the rest, allowing for a quick and straightforward deployment process.

Where is my app hosted?

All applications hosted on Hostim.dev are deployed on bare-metal servers located in Germany. This ensures compliance with GDPR regulations and guarantees that data remains within the European Union.

Do I need to know Kubernetes?

No, users do not need to have prior knowledge of Kubernetes to use Hostim.dev. The platform abstracts the complexities of Kubernetes management, allowing developers to focus on their applications rather than the underlying infrastructure.

Alternatives

Fallom Alternatives

Fallom is an AI-native observability platform in the development tools category. It provides real-time monitoring and debugging specifically for large language models and AI agents in production. Users look for alternatives because of budget constraints, different feature requirements, or the need to integrate with their existing technology stack. When evaluating an alternative, focus on core capabilities: the depth of tracing for LLM calls, transparency into costs and performance, and built-in support for compliance and audit requirements. The right tool should give you clear visibility into your AI operations.

Hostim.dev Alternatives

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) that gives developers a straightforward way to deploy Docker applications on EU-hosted infrastructure while keeping control over their applications and the environment they run in. Users look for alternatives because of pricing, specific feature needs, or a preferred cloud platform. When comparing options, consider ease of use, the degree of automation, regulatory compliance, integrations with your existing workflow, the level of support, scalability, and performance. Favor platforms that reduce deployment and infrastructure complexity while maximizing security and reliability, so that developers can focus on building their applications rather than on infrastructure.