diffray vs Fallom

Side-by-side comparison to help you choose the right product.

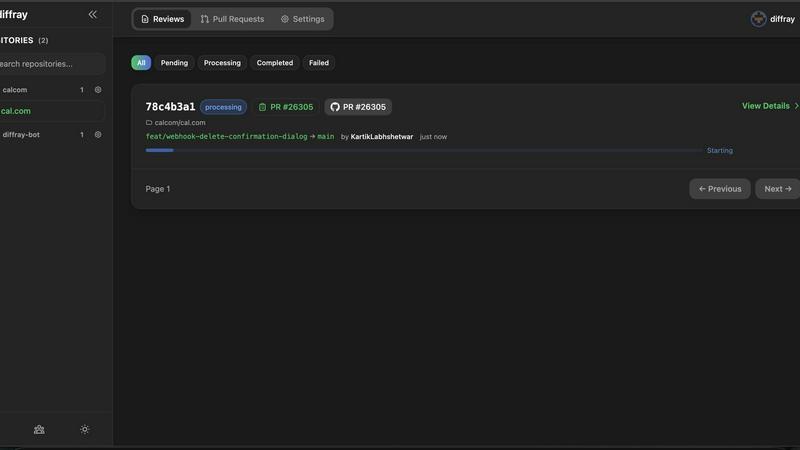

diffray

diffray uses AI agents to catch real bugs in code reviews, not just style issues.

Last updated: February 28, 2026

Fallom

Fallom provides real-time observability for tracking and debugging your LLM and AI agent operations.

Last updated: February 28, 2026

Feature Comparison

diffray

Multi-Agent Specialized Architecture

diffray's foundational feature is its team of over 30 specialized AI agents. Unlike a single AI that tries to be good at everything, each agent is an expert in one specific domain, such as security, performance, or code style. This specialization ensures that every aspect of your code is reviewed by an entity designed specifically to find those types of issues, leading to more accurate and relevant findings than a generalized tool can provide.
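As a rough illustration of the dispatch pattern described above, the sketch below runs two toy "agents" over the added lines of a diff and merges their findings. This is not diffray's implementation: the source describes AI agents, while these stand-ins use simple pattern rules, and every name here (`Finding`, `security_agent`, `performance_agent`, `review`) is hypothetical.

```python
import re
from typing import NamedTuple


class Finding(NamedTuple):
    agent: str      # which specialized reviewer raised the issue
    line_no: int    # 1-based line number within the added lines
    message: str


def security_agent(lines):
    # Toy rule: flag SQL built with an f-string, a common injection risk.
    return [Finding("security", i, "possible SQL injection via string formatting")
            for i, line in enumerate(lines, 1)
            if re.search(r"execute\(\s*f[\"']", line)]


def performance_agent(lines):
    # Toy rule: flag blocking sleeps.
    return [Finding("performance", i, "blocking sleep call")
            for i, line in enumerate(lines, 1)
            if "time.sleep(" in line]


# Each agent looks at one domain only; the reviewer merges the results.
AGENTS = [security_agent, performance_agent]


def review(added_lines):
    findings = []
    for agent in AGENTS:
        findings.extend(agent(added_lines))
    return sorted(findings, key=lambda f: f.line_no)


diff = [
    'cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")',
    "time.sleep(5)",
    "return rows",
]
results = review(diff)
for finding in results:
    print(f"[{finding.agent}] line {finding.line_no}: {finding.message}")
```

Keeping each reviewer narrow means a new domain can be added by appending one function to the list, without touching the others.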

Full-Context Code Analysis

diffray moves beyond simple line-by-line diff review. It analyzes pull requests by understanding the full context of the codebase. This means it can identify how new changes interact with existing code, spot potential integration issues, and recognize patterns that only become apparent when viewing the system as a whole. This contextual awareness is fundamental to providing truly insightful and actionable feedback.

Actionable and Precise Feedback

The platform is engineered to reduce noise and focus on what matters. By leveraging its team of specialized agents, diffray filters out trivial suggestions and highlights critical, high-priority issues that require developer attention. The feedback is clear, precise, and directly tied to improving code security, performance, and maintainability, allowing developers to act on it with confidence.

Comprehensive Issue Coverage

diffray covers the main software quality domains by assigning dedicated agents to each one. This includes analysis for security vulnerabilities, performance anti-patterns, common logic bugs, adherence to language-specific best practices, and even SEO considerations where they apply to the codebase. The aim of this coverage is that no critical aspect of code quality is overlooked.

Fallom

End-to-End LLM Tracing

Fallom provides complete, granular tracing for every interaction with large language models. This means you can see the full sequence of events for any AI task, from the initial user prompt, through intermediate reasoning steps and tool calls, to the final response. Each trace includes the raw input and output, the specific model used, token counts, latency metrics, and the calculated cost. This level of detail is the basic building block for understanding how your AI applications behave in the real world, making debugging and optimization possible.
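To make the contents of a trace concrete, here is a minimal sketch of a single traced LLM call and how its cost could be derived from token counts. The field names, the `LLMSpan` class, and the prices in `PRICE_PER_MTOK` are assumptions for illustration only; they are not Fallom's schema or any provider's actual pricing.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real prices vary by provider.
PRICE_PER_MTOK = {"example-model": {"input": 2.50, "output": 10.00}}


@dataclass
class LLMSpan:
    """One traced LLM call (illustrative fields, not Fallom's schema)."""
    model: str
    prompt: str
    response: str
    input_tokens: int
    output_tokens: int
    latency_ms: float

    def cost_usd(self) -> float:
        # Cost is tokens multiplied by the per-token price for each direction.
        price = PRICE_PER_MTOK[self.model]
        return (self.input_tokens * price["input"]
                + self.output_tokens * price["output"]) / 1_000_000


span = LLMSpan(
    model="example-model",
    prompt="Summarize this support ticket.",
    response="The user reports a login failure.",
    input_tokens=1200,
    output_tokens=300,
    latency_ms=840.0,
)
print(f"{span.model}: {span.latency_ms} ms, ${span.cost_usd():.4f}")
```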

Real-Time Monitoring Dashboard

The platform offers a live dashboard that displays all LLM calls as they happen in production. You can monitor activity in real time, watching traces for different models, users, or sessions stream in. This dashboard allows you to see key metrics at a glance, such as request volume, average latency, and error rates. By providing a live view of your system's health, it enables teams to spot anomalies, performance degradation, or unexpected cost spikes immediately, facilitating faster incident response.

Cost Attribution and Analysis

A fundamental aspect of managing AI applications is understanding and controlling expenses. Fallom automatically attributes costs to their source. You can break down spending by AI model, by individual user or customer, by internal team, or by specific feature. This transparent cost tracking is essential for accurate budgeting, internal chargebacks, and identifying inefficient or expensive patterns in your LLM usage, helping you make informed decisions about model selection and optimization.
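The breakdown described above amounts to grouping per-call costs by a chosen dimension. The sketch below shows that idea on a few made-up records; the record keys and the `attribute_costs` helper are hypothetical and do not reflect Fallom's API.

```python
from collections import defaultdict

# Made-up call records for illustration.
calls = [
    {"model": "model-a", "customer": "acme", "feature": "search", "cost_usd": 0.012},
    {"model": "model-a", "customer": "globex", "feature": "chat", "cost_usd": 0.030},
    {"model": "model-b", "customer": "acme", "feature": "chat", "cost_usd": 0.004},
]


def attribute_costs(records, dimension):
    """Sum cost per distinct value of one dimension (model, customer, feature)."""
    totals = defaultdict(float)
    for record in records:
        totals[record[dimension]] += record["cost_usd"]
    return dict(totals)


by_model = attribute_costs(calls, "model")
by_customer = attribute_costs(calls, "customer")
by_feature = attribute_costs(calls, "feature")

for name, total in sorted(by_feature.items()):
    print(f"{name}: ${total:.3f}")
```

The same records answer different questions depending on the grouping key, which is why a cost needs to be recorded per call rather than per invoice.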

Compliance and Audit Readiness

For enterprises operating in regulated industries, Fallom is built with compliance as a core feature. It maintains complete, immutable audit trails of every LLM interaction, supporting requirements for standards like SOC 2, GDPR, and the EU AI Act. Features include detailed input/output logging, model version tracking, user consent recording, and session-level context. This ensures you have a verifiable record of your AI's operations for security reviews, regulatory audits, and internal governance.

Use Cases

diffray

Accelerating Pull Request Reviews

Development teams use diffray to dramatically reduce the time spent on manual code review cycles. By providing an immediate, expert-level first pass on every pull request, diffray surfaces critical issues early. This allows human reviewers to focus on higher-level architecture and logic discussions rather than basic bug-hunting, speeding up the merge process without sacrificing quality.

Enforcing Code Quality and Best Practices

Engineering leads and architects integrate diffray into their development workflow to consistently enforce coding standards and best practices across the entire team. The platform acts as an always-available, unbiased expert reviewer, ensuring that every piece of code meets organizational standards for security, performance, and style before it is even seen by a human reviewer.

Proactive Security and Performance Auditing

Organizations adopt diffray for its deep, proactive analysis in critical areas. The specialized security agents scan each change for vulnerabilities like injection flaws or insecure dependencies, while performance agents identify bottlenecks and inefficient patterns. This shifts security and performance left in the development lifecycle, so that issues are caught before they reach production.

Onboarding and Mentoring Junior Developers

diffray serves as an excellent educational tool for developers at the beginning of their careers. By providing instant, contextual feedback on code that explains not just the "what" but often the "why" behind best practices and potential pitfalls, it helps junior engineers learn and internalize high-quality coding patterns faster, accelerating their professional growth.

Fallom

Debugging and Improving AI Agent Workflows

When a complex AI agent that uses multiple tools and LLM calls fails or behaves unexpectedly, pinpointing the root cause is challenging. Fallom's tracing allows developers to replay the exact sequence of steps, examine the prompts and responses at each stage, and view the arguments and results of every tool call. This visibility turns debugging from a guessing game into a systematic process, drastically reducing the time to resolve issues and improve agent reliability.

Managing and Optimizing AI Operational Costs

As AI applications scale, costs can become unpredictable and difficult to manage. Fallom addresses this by providing clear, actionable data on where every dollar is spent. Product and engineering leads can use Fallom to identify which features or customers are the most expensive, compare the cost-performance ratio of different models like GPT-4o versus Claude, and set alerts for budget overruns. This enables proactive cost control and ensures sustainable scaling.

Ensuring Compliance and Auditability

Companies in finance, healthcare, or legal services using AI must demonstrate compliance with strict regulations. Fallom serves as a system of record for all AI activity. It automatically logs all necessary data—who used the system, what was asked, which model version answered, and what was said—creating a defensible audit trail. This is essential for passing security audits, responding to data subject requests, and proving adherence to industry regulations.

Performance Monitoring and Reliability Engineering

Site Reliability Engineering (SRE) principles apply to AI systems as well. Teams use Fallom to establish performance baselines for their LLM calls, monitor latency and error rate Service Level Objectives (SLOs), and set up alerts for degradation. The timing waterfall charts help visualize where bottlenecks occur in multi-step chains, allowing engineers to optimize slow steps and ensure a consistent, reliable user experience for AI-powered features.
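A latency and error-rate SLO check of the kind described above can be sketched in a few lines. The thresholds, sample data, and function names below are assumptions chosen for illustration; the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def check_slo(latencies_ms, errors, p95_target_ms, max_error_rate):
    """Compare observed p95 latency and error rate against SLO targets."""
    p95 = percentile(latencies_ms, 95)
    error_rate = sum(errors) / len(errors)
    return {
        "p95_ms": p95,
        "error_rate": error_rate,
        "latency_ok": p95 <= p95_target_ms,
        "errors_ok": error_rate <= max_error_rate,
    }


# Ten sample calls: one slow outlier and one failed request.
latencies = [420, 510, 480, 2300, 450, 470, 530, 490, 460, 500]
errors = [False] * 9 + [True]

report = check_slo(latencies, errors, p95_target_ms=1500, max_error_rate=0.05)
print(report)
```

With only ten samples, the single outlier determines the p95 value; in practice a baseline would be computed over a much larger window.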

Overview

About diffray

diffray is a multi-agent AI code review platform designed to fundamentally improve the software development process. It addresses the core shortcomings of traditional, single-model AI review tools, which often generate excessive noise and miss critical issues. At its heart, diffray is built on a principle of specialization. Instead of relying on one general-purpose AI, it employs a team of over 30 distinct AI agents. Each agent is a dedicated expert in a specific domain, such as security vulnerabilities, performance bottlenecks, bug patterns, best practices, or SEO considerations. This targeted, back-to-basics approach allows diffray to conduct deep, investigative analysis of pull requests. It understands not just the diff but the full context of the codebase, leading to actionable, precise feedback that developers can trust and act upon immediately. The result is a dramatic reduction in manual review time and a significant increase in the quality and reliability of code merged into production. diffray is an essential tool for individual developers seeking to improve their craft, engineering leads responsible for team output, and organizations of all sizes committed to building secure, maintainable, and high-quality software.

About Fallom

Fallom is an AI-native observability platform built from the ground up for teams developing applications with large language models (LLMs) and AI agents. In the complex world of AI operations, traditional monitoring tools fall short. Fallom provides the fundamental visibility needed to understand, manage, and improve AI-powered systems in production. It works by automatically tracing every LLM call, capturing essential data like the exact prompts sent, the model's outputs, any tool or function calls made, token usage, latency, and per-call costs. This end-to-end tracing is the cornerstone of AI observability. The platform is designed for engineering and product teams who need to move beyond simple logging to gain actionable insights. Its core value proposition is delivering comprehensive, real-time visibility into AI workloads, enabling organizations to optimize performance, control costs, troubleshoot issues quickly, and maintain compliance with enterprise and regulatory standards. With its OpenTelemetry-native SDK, integrating Fallom is a straightforward process, allowing teams to start tracing their applications in minutes and establish a foundational layer of observability for their AI initiatives.

Frequently Asked Questions

diffray FAQ

How is diffray different from other AI code review tools?

diffray is fundamentally different due to its multi-agent, specialized architecture. Most other tools use a single, general-purpose AI model to attempt all types of analysis, which can lead to generic, noisy, or incomplete feedback. diffray uses over 30 AI agents, each a domain expert, ensuring deep and precise analysis in areas like security, performance, and bugs. This results in more actionable, trustworthy, and context-aware reviews.

What programming languages and frameworks does diffray support?

diffray is designed to understand a wide array of modern programming languages and their associated frameworks. The specialized agent system allows for deep, language-specific analysis. For the most current and detailed list of supported languages and frameworks, please refer to the official diffray documentation, as this list is continually expanded based on the evolution of the software development landscape.

How does diffray handle the context of my entire codebase?

diffray does not just look at the changed lines in a pull request. It is engineered to ingest and understand the relevant context of your entire codebase. This allows its agents to analyze how new changes integrate with existing modules, identify broken dependencies, spot inconsistent patterns, and provide feedback that is meaningful within the full scope of your project, not just an isolated snippet.

Is my code secure when using diffray?

Code security is a foundational priority for diffray. The platform employs enterprise-grade security practices to protect your intellectual property. Your code is processed securely for the purpose of analysis, and diffray does not retain or use your code to train general AI models. You maintain full ownership and control of your code at all times.

Fallom FAQ

What is AI observability and why is it different?

AI observability is the practice of gaining deep, actionable insights into the behavior and performance of AI systems, particularly those based on LLMs. It is different from traditional application monitoring because LLMs are non-deterministic. You need to see not just if a call failed, but why it failed—was the prompt poorly constructed, did a tool call error, or did the model hallucinate? Observability provides the context of prompts, outputs, and intermediate steps necessary to answer these questions.

How difficult is it to integrate Fallom into my existing application?

Integration is designed to be straightforward. Fallom provides an OpenTelemetry-native SDK, and OpenTelemetry is the industry-standard framework for observability. In most cases, you can instrument your application by adding a few lines of code to your LLM client initialization. The goal is to have basic tracing up and running in under five minutes, without requiring major changes to your application architecture or adding noticeable performance overhead.

Can Fallom handle sensitive or private data?

Yes. Fallom includes a Privacy Mode for handling sensitive information. This mode allows you to configure content redaction, so that specific data fields or entire prompt/response contents are not captured in the logs, while still preserving essential metadata for tracing and metrics. You can maintain full telemetry for debugging and costing without storing confidential user data, aligning with data privacy policies.
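The idea of redacting content while keeping metadata can be sketched as follows. This is an assumption-laden illustration, not Fallom's Privacy Mode: the `redact_span` function, its `mode` values, and the simple email pattern are all hypothetical.

```python
import re

# Deliberately simple email pattern, for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_span(span, mode="full"):
    """Return a copy of the span that is safe to store.

    mode="full" drops prompt and response text entirely;
    mode="pattern" masks only email addresses.
    Metadata such as model, token counts, and latency is left untouched.
    """
    safe = dict(span)
    for field in ("prompt", "response"):
        if mode == "full":
            safe[field] = "[REDACTED]"
        else:
            safe[field] = EMAIL.sub("[EMAIL]", span[field])
    return safe


span = {
    "model": "example-model",
    "prompt": "Email jane@example.com please",
    "response": "Done",
    "input_tokens": 5,
    "latency_ms": 100.0,
}

full = redact_span(span)
masked = redact_span(span, mode="pattern")
print(full["prompt"], "|", masked["prompt"])
```

Because token counts and latency survive redaction, cost and performance metrics can still be computed from the stored record.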

Does Fallom support all LLM providers and frameworks?

Fallom is built to be provider-agnostic. It works with major LLM providers such as OpenAI, Anthropic, and Google Gemini, as well as open-source models. Because it is built on OpenTelemetry, it can integrate with any framework or custom code that makes LLM calls. This prevents vendor lock-in and ensures you can maintain a unified observability platform even if your tech stack evolves or you switch model providers.

Alternatives

diffray Alternatives

diffray is a specialized AI code review platform in the software development category. It employs a multi-agent architecture to conduct deep, contextual analysis of code, focusing on catching real bugs and security issues rather than superficial style points. This approach sets it apart from more generalized tools. Users may explore alternatives for various practical reasons. These can include budget constraints, the need for integration with specific development platforms or CI/CD pipelines, or a desire for different feature sets, such as more granular control over review rules or team collaboration workflows. Every development team has unique requirements and constraints. When evaluating an alternative, focus on the core principles of effective code review automation. Look for tools that provide meaningful, actionable feedback and keep noise low for developers. The ability to understand code in context, not just isolated changes, is crucial for catching architectural and logic errors. Ultimately, the goal is to find a solution that genuinely improves code quality and developer velocity.

Fallom Alternatives

Fallom is an AI-native observability platform in the development tools category. It provides real-time monitoring and debugging specifically for large language models and AI agents in production. Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, or integration requirements with their existing technology stack. The specific needs of a project or organization can drive the search for a different solution. When evaluating an alternative, focus on core capabilities. Key considerations include the depth of tracing for LLM calls, transparency into costs and performance, and built-in support for compliance and audit requirements. The right tool should provide clear visibility into your AI operations.