Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right product.

Agenta

Agenta centralizes prompt management and evaluation, enabling reliable LLM development through structured collaboration.

Last updated: March 1, 2026

qtrl.ai

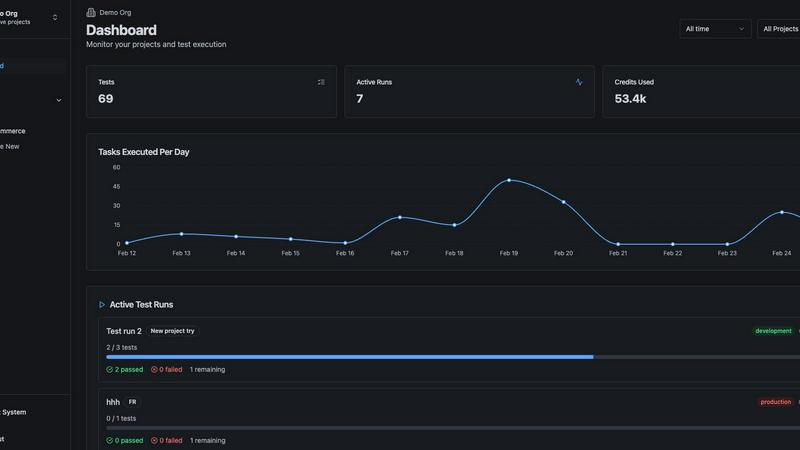

qtrl.ai helps QA teams scale testing with AI agents while maintaining full control and governance.

Last updated: March 4, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta lets teams keep their prompts, evaluations, and traces in one platform instead of scattering them across tools such as Slack and Google Sheets, which makes it easier for team members to collaborate.

Automated Evaluation System

The platform features an automated evaluation system that replaces guesswork with evidence-based insights. Teams can systematically run experiments, track results, and validate every change, creating a reliable foundation for decision-making.
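To make the idea of systematic experiments concrete, here is a minimal sketch of an evaluation loop: each prompt variant is run against the same test set and scored by an evaluator. It does not use Agenta's SDK; `EvalCase`, `run_experiment`, `exact_match`, and `stub_model` are hypothetical names chosen for illustration, and the stub stands in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    inputs: Dict[str, str]  # values substituted into the prompt template
    expected: str           # reference answer for this case


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 when the normalized output equals the expected answer."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_experiment(
    variants: Dict[str, str],
    test_set: List[EvalCase],
    model: Callable[[str], str],
    evaluator: Callable[[str, str], float] = exact_match,
) -> Dict[str, float]:
    """Return the mean evaluator score for each prompt variant."""
    if not test_set:
        raise ValueError("test_set must contain at least one case")
    results = {}
    for name, template in variants.items():
        scores = [
            evaluator(model(template.format(**case.inputs)), case.expected)
            for case in test_set
        ]
        results[name] = sum(scores) / len(scores)
    return results


def stub_model(prompt: str) -> str:
    # Stand-in for an LLM call (assumption: a real setup would call a provider).
    return "Paris" if "capital of France" in prompt else "unknown"


variants = {
    "v1": "What is the capital of {country}?",
    "v2": "Name the capital city: {country}",
}
test_set = [EvalCase(inputs={"country": "France"}, expected="Paris")]
scores = run_experiment(variants, test_set, stub_model)
print(scores)
```

Because every variant sees the same test set and the same evaluator, a change in score can be attributed to the prompt change rather than to a different sample of inputs.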

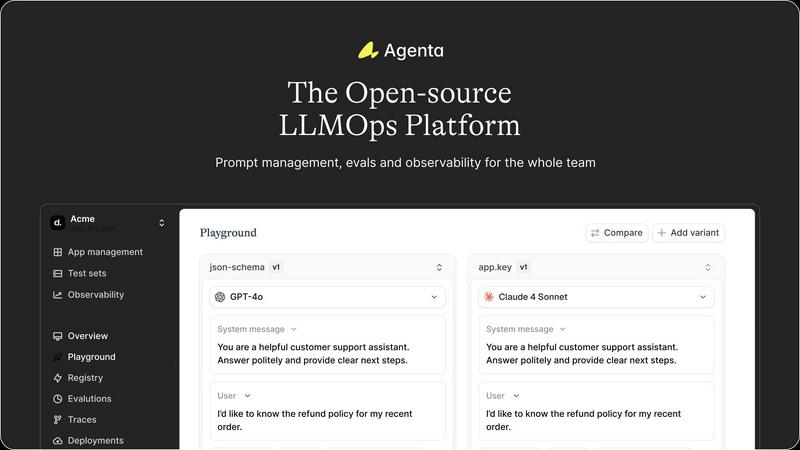

Unified Playground for Experimentation

Agenta includes a unified playground that enables teams to compare prompts and models side-by-side. This feature supports iterative development by allowing teams to test and refine their prompts in a controlled environment.

Comprehensive Observability Tools

With built-in observability tools, Agenta offers the ability to trace every request and identify exact failure points. The platform facilitates annotation of traces, enabling teams to gather user feedback and turn any trace into a test with a single click.
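As a rough illustration of what tracing a request and turning a trace into a test could look like as data, consider the sketch below. It is not Agenta's data model or API; `Span`, `Trace`, `first_failure`, and `trace_to_test_case` are hypothetical names, and the sketch assumes a trace is an ordered list of steps whose first step carries the original request inputs.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Span:
    name: str                     # step in the request, e.g. "retrieve"
    inputs: Dict[str, str]
    output: Optional[str] = None
    error: Optional[str] = None   # set when this step failed


@dataclass
class Trace:
    request_id: str
    spans: List[Span] = field(default_factory=list)
    annotation: Optional[str] = None  # reviewer or user feedback


def first_failure(trace: Trace) -> Optional[Span]:
    """Return the earliest span that recorded an error, if any."""
    return next((span for span in trace.spans if span.error is not None), None)


def trace_to_test_case(trace: Trace, expected: str) -> Dict[str, object]:
    """Reuse the original request inputs as a regression test case."""
    root = trace.spans[0]
    return {
        "inputs": root.inputs,
        "expected": expected,
        "source_trace": trace.request_id,
    }


trace = Trace(
    request_id="req-1",
    spans=[
        Span("request", {"question": "What is our refund policy?"}),
        Span("retrieve", {"query": "refund policy"}, error="timeout"),
        Span("generate", {"context": ""}, output="I don't know."),
    ],
    annotation="Answer should cite the 30-day policy.",
)
failed = first_failure(trace)
test_case = trace_to_test_case(trace, expected="Refunds are available within 30 days.")
```

Keeping a reference to the source trace in the test case makes it possible to go back from a failing test to the production request that motivated it.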

qtrl.ai

Enterprise-Grade Test Management

This feature provides a structured foundation for all quality activities. It offers a centralized repository for test cases, plans, and runs, ensuring everything is organized and accessible. Full traceability links tests back to requirements, and detailed audit trails are maintained for compliance. It supports both manual and automated workflows, giving teams the flexibility to manage quality in a way that fits their current process while preparing for more advanced automation.

Progressive AI Automation

Instead of a sudden, all-or-nothing approach, qtrl.ai introduces automation progressively. Teams begin by writing high-level test instructions in plain English. When ready, they can leverage AI to generate detailed test scripts from those instructions. The AI also suggests new tests based on coverage gaps. Crucially, every AI-generated step is fully reviewable and approvable by a human, maintaining oversight and ensuring tests align with team expectations before execution.
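The review-before-execution rule can be pictured as a simple gate. The sketch below is an assumption about how such a gate might be modeled, not qtrl.ai's implementation; `Status`, `Step`, `approve`, and `ready_to_run` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Step:
    description: str                  # AI-generated step text
    status: Status = Status.PENDING   # every generated step starts unreviewed
    reviewer: str = ""


def approve(step: Step, reviewer: str) -> None:
    """Record a human approval on a generated step."""
    step.status = Status.APPROVED
    step.reviewer = reviewer


def ready_to_run(steps: List[Step]) -> bool:
    """A test may execute only when it has steps and all are approved."""
    return bool(steps) and all(step.status is Status.APPROVED for step in steps)


steps = [Step("Open the login page"), Step("Submit valid credentials")]
before_review = ready_to_run(steps)

for step in steps:
    approve(step, reviewer="qa-lead")
after_review = ready_to_run(steps)
```

Defaulting every generated step to `PENDING` means nothing runs unless a person has explicitly approved it, which matches the human-oversight behavior described above.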

Autonomous QA Agents

These are intelligent executors that operate within defined rules. They can run tests on demand or continuously across multiple real browsers and environments, such as development, staging, and production. The agents execute instructions precisely, providing real browser interaction rather than simulations. They operate with permissioned autonomy levels, meaning their actions are transparent and controllable, never making unpredictable "black-box" decisions.

Adaptive Memory & Multi-Environment Execution

The platform builds a living knowledge base of your application by learning from exploration, test execution, and discovered issues. This context makes test generation smarter over time. Coupled with robust multi-environment execution, teams can run tests across any stage of the development lifecycle. The system securely manages per-environment variables and encrypted secrets, which are never exposed to the AI agent, ensuring security and consistency.
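One way to keep secrets away from an AI agent is to let the agent work only with placeholders and substitute real values at the moment a step is executed. The sketch below illustrates that pattern under stated assumptions: the `{{NAME}}` placeholder syntax, `agent_view`, and `resolve` are hypothetical and not taken from qtrl.ai, and encryption at rest is not modeled.

```python
import re
from typing import Dict

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")  # matches {{NAME}}


def agent_view(step: str, variables: Dict[str, str]) -> str:
    """What the agent sees: variables filled in, secret placeholders left intact."""
    return PLACEHOLDER.sub(
        lambda match: variables.get(match.group(1), match.group(0)), step
    )


def resolve(step: str, variables: Dict[str, str], secrets: Dict[str, str]) -> str:
    """What the executor sends to the browser: all placeholders substituted."""
    def lookup(match: "re.Match[str]") -> str:
        key = match.group(1)
        return secrets[key] if key in secrets else variables[key]

    return PLACEHOLDER.sub(lookup, step)


# Per-environment configuration (illustrative values only).
staging_vars = {"BASE_URL": "https://staging.example.com"}
staging_secrets = {"ADMIN_PASSWORD": "s3cret"}

step = "Open {{BASE_URL}}/login and enter password {{ADMIN_PASSWORD}}"
seen_by_agent = agent_view(step, staging_vars)
sent_to_browser = resolve(step, staging_vars, staging_secrets)
```

Switching environments then means swapping the variable and secret dictionaries while the test instructions stay unchanged.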

Use Cases

Agenta

Collaborative Prompt Development

Agenta is ideal for collaborative prompt development, where product managers, developers, and domain experts can work together to iterate and refine prompts. This collaboration leads to more robust and effective LLM applications.

Performance Monitoring and Debugging

AI teams can use Agenta to monitor production systems and detect regressions in real time. Its observability features help teams quickly identify and fix performance issues, improving the reliability of their applications.

Structured Experimentation

Agenta provides a structured environment for experimentation. Teams can run side-by-side comparisons of different models and prompts, allowing them to make data-driven decisions based on systematic evaluations.

Integration with Existing Workflows

Agenta integrates with popular frameworks and model providers such as LangChain and OpenAI, making it easy to incorporate into existing workflows. Teams can keep their current tools while benefiting from Agenta's structured approach to LLM development.

qtrl.ai

Scaling Beyond Manual Testing

For QA teams overwhelmed by repetitive manual test cycles, qtrl.ai provides a clear path forward. Teams can start by structuring their existing manual tests in the platform. Then, they can progressively automate the most tedious and high-value test cases using AI-generated scripts, freeing up human testers for more complex exploratory work and significantly increasing test coverage and execution speed.

Modernizing Legacy QA Workflows

Companies relying on outdated, siloed, or spreadsheet-based test management systems can consolidate their entire QA process into qtrl.ai. The platform brings test management, automation, and execution into a single, governed system. This modernization provides immediate benefits like real-time dashboards, audit trails, and traceability, while setting the stage for intelligent automation without a disruptive overhaul.

Governing Enterprise AI Testing

Enterprises with strict compliance, security, and governance requirements can safely adopt AI for testing with qtrl.ai. The platform's design ensures full visibility into all AI agent activities, maintains detailed audit trails, and keeps human oversight at the center. Teams can grant autonomy gradually, ensuring the AI operates within strict guardrails and corporate policies, making it a trustworthy solution for regulated industries.

Enhancing Product-Led Engineering

Product-led engineering teams that need to move fast without breaking things can integrate qtrl.ai into their CI/CD pipelines. The platform supports continuous quality feedback loops, allowing teams to run automated test suites against every build. AI agents can be tasked with verifying new features or conducting regression tests, providing rapid feedback and ensuring quality keeps pace with development velocity.

Overview

About Agenta

Agenta is an open-source LLMOps platform tailored for AI teams that aim to develop and deploy reliable large language model (LLM) applications. Designed to bridge the communication gap between developers and subject matter experts, Agenta creates a collaborative workspace that facilitates experimentation with prompts, performance evaluation, and effective debugging of production issues. The platform addresses significant challenges faced by AI teams, including the inherent unpredictability of LLMs and the disjointed workflows that often occur across various tools. By centralizing the entire LLM development process, Agenta enhances team productivity and significantly reduces the time typically spent on debugging. With a structured approach to LLM development, Agenta empowers teams to adhere to best practices, streamline their workflows, and ultimately deliver high-quality LLM applications more efficiently. Whether you are a developer, product manager, or domain expert, Agenta provides the tools necessary for effective collaboration and innovation in AI development.

About qtrl.ai

qtrl.ai is a modern QA platform designed to help software development teams scale their quality assurance efforts effectively. At its core, it addresses a fundamental challenge: the trade-off between speed and control. Many teams are caught between slow, unscalable manual testing and complex, brittle traditional automation tools. qtrl.ai provides a structured solution by combining enterprise-grade test management with intelligent, trustworthy AI automation. This creates a centralized hub where teams can organize test cases, plan test runs, trace requirements, and track quality metrics through real-time dashboards. The platform is built for progression, allowing teams to start with simple manual test management and gradually introduce AI-powered automation as they become comfortable. This makes it an ideal fit for product-led engineering teams, QA groups moving beyond manual processes, companies modernizing legacy workflows, and enterprises that require strict compliance and audit trails. Ultimately, qtrl.ai's mission is to bridge the gap between speed and control, offering a transparent, governed path to faster and more intelligent quality assurance without the risks associated with unpredictable "black-box" AI solutions.

Frequently Asked Questions

Agenta FAQ

What is LLMOps and how does Agenta fit into it?

LLMOps refers to the practices and tools used to manage the lifecycle of large language models. Agenta fits into this by providing a structured platform that centralizes prompt management, evaluation, and observability, streamlining the LLM development process.

How does Agenta facilitate collaboration among team members?

Agenta fosters collaboration by allowing product managers, developers, and domain experts to work together in one unified platform. It provides tools for prompt iteration, evaluation, and debugging, enabling real-time collaboration and feedback.

Can Agenta be integrated with other AI frameworks?

Yes, Agenta is designed to integrate with AI frameworks and model providers, including LangChain and OpenAI. This flexibility allows teams to use Agenta alongside their existing tools without disruption.

What kind of support does Agenta provide for debugging?

Agenta offers comprehensive observability tools that trace requests and identify failure points. This allows teams to annotate traces, gather feedback, and quickly debug issues, reducing the time and effort spent on troubleshooting.

qtrl.ai FAQ

How does qtrl.ai's AI differ from other "autonomous" testing tools?

qtrl.ai avoids a risky "black-box" approach. Its AI is designed for transparency and control. It does not make unpredictable decisions. Instead, it generates test steps from human instructions, which must be reviewed and approved before execution. You define the rules and level of autonomy. This progressive, governed model ensures the AI assists your team reliably and builds trust over time.

Can we use qtrl.ai if we are not ready for full AI automation?

Absolutely. qtrl.ai is built for progression. You can start by using it solely as a powerful test management platform to organize manual test cases, plans, and runs. When your team is ready, you can begin experimenting with AI-generated test creation for specific scenarios. The platform adapts to your pace, allowing you to increase automation gradually without any pressure to change your entire workflow overnight.

How does qtrl.ai handle security and sensitive data?

Security is a foundational principle. qtrl.ai offers enterprise-ready security measures. For automation, you can define environment-specific variables and encrypted secrets (like passwords or API keys). These secrets are never exposed to the AI agent during test execution. The platform provides full audit trails and is built to support compliance requirements, giving you control over your data and test assets.

Does qtrl.ai work with our existing development tools?

Yes, qtrl.ai is designed to integrate into real-world workflows. It offers requirements management integration, CI/CD pipeline support, and is built to work alongside your existing toolset. The goal is to enhance your current process, not replace it entirely. This allows teams to incorporate structured test management and intelligent automation without disrupting their established development lifecycle.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that centralizes the management, evaluation, and debugging of LLM applications for AI teams. It addresses the challenges developers and subject matter experts face in building reliable AI applications. Users look for alternatives for reasons such as pricing, specific feature sets, or compatibility with their existing workflows and platforms. When choosing an alternative, consider ease of use, integration capabilities, support options, and how effectively the tool improves the LLM development process.

qtrl.ai Alternatives

qtrl.ai is a modern quality assurance platform in the automation and developer tools category. It helps software teams scale their testing efforts by combining structured test management with intelligent AI agents. This approach allows teams to maintain full control and governance while gradually introducing automation. Users explore alternatives for several reasons: budget constraints, a need for features the platform does not offer, or a requirement to integrate with a different set of development tools. The specific needs of a team's workflow and application stack are also key factors. When evaluating any alternative, look at the core capabilities. Consider the balance between manual test organization and automated execution. Assess how the tool handles test maintenance and reporting. Finally, evaluate the level of control and transparency the platform offers, especially where AI-driven features are involved.